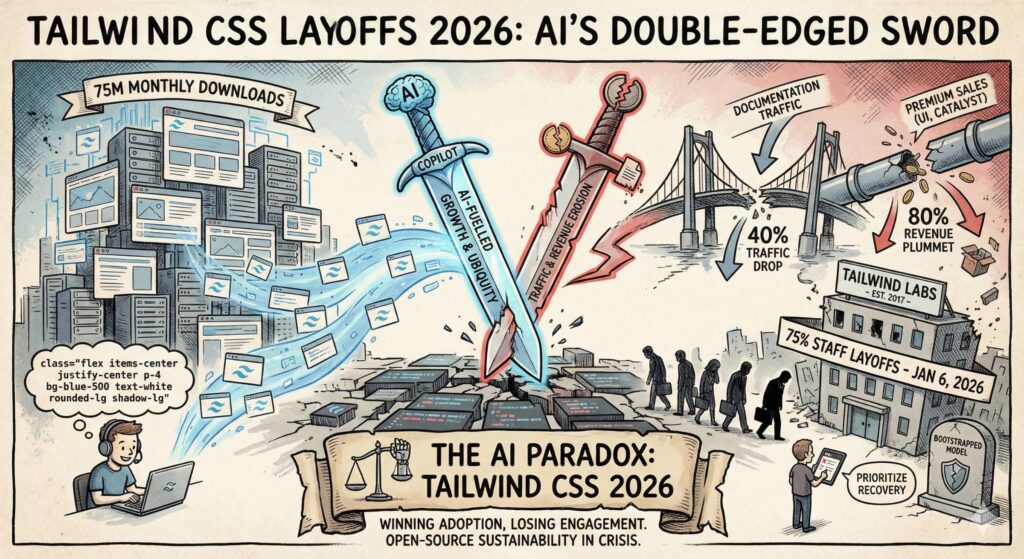

TLDR: Tailwind Labs, creator of the popular Tailwind CSS framework, laid off 75% of its engineering team on January 6, 2026, citing AI-driven disruption to its business. While AI boosted Tailwind’s popularity to 75 million monthly downloads, it also cut documentation traffic by 40% and revenue by 80%, as developers rely on AI tools like GitHub Copilot instead of visiting the site. This “AI paradox” highlights vulnerabilities in open-source business models and has sparked community debate about sustainability and future adaptations.

Key Takeaways

- Tailwind CSS’s explosive growth is fueled by AI coding agents generating its code by default, leading to ubiquity in modern web development but bypassing traditional learning and monetization channels.

- Documentation site traffic has dropped 40% since early 2023, crippling upsells for premium products like Tailwind UI and Catalyst, as AI answers questions without developers visiting the site.

- Revenue plummeted 80%, forcing drastic layoffs in the bootstrapped company, with no venture backing to cushion the blow.

- The announcement came via a GitHub PR comment and went viral on X, Hacker News, and Reddit, eliciting sympathy, remarks on the irony, and calls for pivots or acquisitions.

- Broader implications include risks for other documentation-heavy tools, shallower understanding among developers who no longer study the docs, and faster commoditization of open source by AI.

- Potential futures: Short-term focus on maintenance, long-term shifts to AI-integrated products, partnerships, or new revenue streams like subscriptions.

Detailed Summary

Tailwind CSS, launched in 2017 by Adam Wathan and Steve Schoger, revolutionized web development with its utility-first approach. Developers apply classes directly in HTML for rapid UI building, integrating seamlessly with frameworks like React and Next.js. Tailwind Labs monetizes through premium offerings while keeping the core framework open-source and free.
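To make the utility-first idea concrete, here is a minimal sketch in TypeScript. The `renderButton` helper is hypothetical and written only for illustration; it is not part of Tailwind CSS. The class names are standard Tailwind utilities, and the sketch assumes the label is trusted text, so it does no HTML escaping.

```typescript
// Hypothetical helper for illustration only; not a Tailwind CSS API.
// Each class is a single-purpose Tailwind utility applied directly in the markup.
function renderButton(label: string): string {
  const classes = [
    "px-4",              // horizontal padding
    "py-2",              // vertical padding
    "rounded",           // rounded corners
    "bg-blue-600",       // background color
    "text-white",        // text color
    "font-semibold",     // font weight
    "hover:bg-blue-700", // darker background on hover
  ].join(" ");

  // Assumption: label is trusted text, so it is inserted without escaping.
  return `<button class="${classes}">${label}</button>`;
}

console.log(renderButton("Save"));
```

All of the styling lives in the `class` attribute rather than in a separate stylesheet, which is also why generated markup of this kind is easy for an AI tool to produce without the developer consulting the documentation.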

The crisis unfolded on January 6, 2026, when Wathan announced in a GitHub pull request that 75% of the engineering team was laid off. The PR proposed an “AGENTS.md” file for guiding LLMs to generate Tailwind code optimally. Wathan rejected it, citing the need to prioritize business recovery over community features.

In his comment, Wathan explained that traffic to tailwindcss.com had fallen 40% despite rising popularity, because AI tools like Copilot and Claude output Tailwind code without users needing the docs. The site was crucial for promoting paid products, so the lost traffic led to an 80% revenue drop. Contributor Michael Sears warned that the project could become “abandonware” without sustainable funding.

The news exploded online. On X (formerly Twitter), posts like one from @ybhrdwj amassed thousands of likes, highlighting the irony. Discussions on Hacker News (over 465 comments) and Reddit’s r/theprimeagen debated AI’s commoditization of knowledge. Media outlets like DevClass and OfficeChai framed it as a warning for traffic-reliant businesses.

Community reactions mixed shock with suggestions: pivot to avoid Kodak’s fate, shame Big Tech for not contributing, or pursue an acquisition by a firm like Vercel or Anthropic.

Some Thoughts on the AI Paradox and Open-Source Future

This situation exemplifies AI’s disruptive power: it boosts adoption while eroding the foundations that adoption used to pay for. Tailwind “won” by becoming AI’s default CSS choice but lost the human engagement essential for monetization. It’s a wake-up call for bootstrapped startups: relying on organic traffic is precarious when AI answers queries instantly.

For developers, AI enhances productivity but risks shallower skills, potentially flooding codebases with unvetted “junk.” Hiring may favor those who can curate AI outputs effectively.

Open-source sustainability feels more fragile; premium add-ons falter as AI replicates their value for free. Alternatives like enterprise support or AI partnerships could emerge. Tailwind’s resilience lies in its community: if it adapts to AI-native tools, it could thrive. Otherwise, it risks fading, underscoring that in 2026, AI reshapes value chains relentlessly.