Microsoft CEO Satya Nadella sat down with Stripe co-founder John Collison on the Cheeky Pint podcast in November 2025 for a wide-ranging, candid conversation about enterprise AI diffusion, data sovereignty, the durability of Excel, agentic commerce, and why today’s AI infrastructure build-out is fundamentally different from the 2000 dot-com bust.

TL;DW – The 2-Minute Version

- AI is finally delivering “information at your fingertips” inside enterprises via Copilot + the Microsoft Graph

- This CapEx cycle is supply-constrained, not demand-constrained – unlike the dark-fiber overbuild of the dot-com era

- Excel remains unbeatable because it is the world’s most approachable programming environment

- Future of commerce = “agentic commerce” – Stripe + Microsoft are building the rails together

- Company sovereignty in the AI age = your own continually learning foundation model + memory + tools + entitlements

- Satya “wanders the virtual corridors” of Teams channels instead of physical offices

- Microsoft is deliberately open and modular again – echoing its 1980s DNA

Key Takeaways

- Enterprise AI adoption is the fastest Microsoft has ever seen, but still early – most companies haven’t connected their full data graph yet

- Data plumbing is finally happening because LLMs can make sense of messy, unstructured reality instead of depending on rigid schemas

- The killer app is “Deep Research inside the corporation” – Copilot on your full Microsoft 365 + ERP graph

- We are in a supply-constrained GPU/power/shell boom, not a utilization bubble

- Future UI = IDE-style “mission control” for thousands of agents (macro delegation + micro steering)

- Agentic commerce will dominate discovery and directed search; only recurring staples remain untouched

- Consumers will be loyal to AI brands/ensembles, not raw model IDs – defaults and trust matter hugely

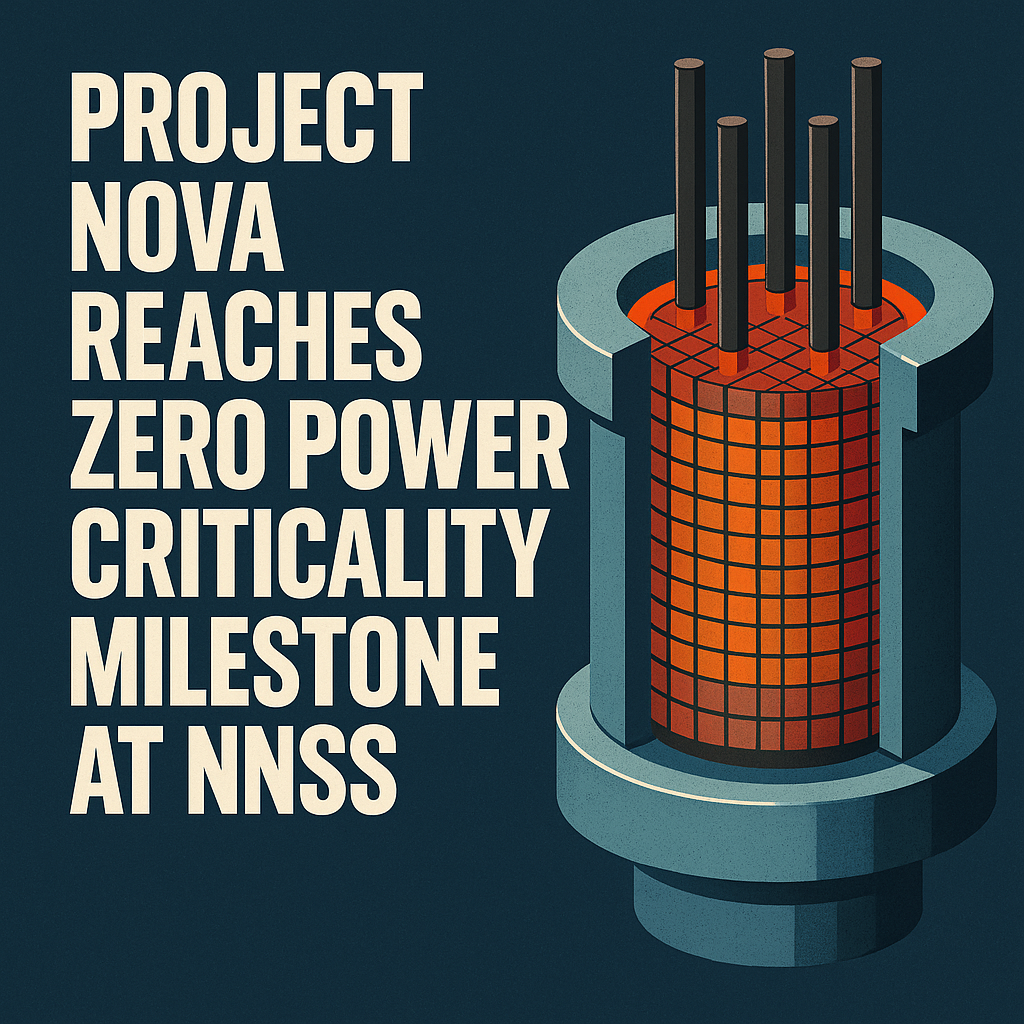

- Microsoft’s stack: Token Factory (Azure infra) → Agent Factory (Copilot Studio) → Systems of Intelligence (M365 Copilot, GitHub Copilot, Security Copilot, etc.)

- Culture lesson: don’t let external memes (e.g., the “guns pointing inward” org-chart cartoon) define internal reality

Detailed Summary

The conversation opens with Nadella’s excitement about Microsoft Ignite 2025: the focus is no longer on showing off someone else’s AI demo but on helping every enterprise build its own “AI factory.” The biggest bottleneck remains organizing the data layer so that intelligence can actually be applied to it.

Copilot’s true power comes from grounding in the Microsoft Graph (email, docs, meetings, relationships), which most companies still underutilize. Retrieval, governance, and thick connectors to ERP systems are finally making the decades-old dream of “all your data at your fingertips” real.

Nadella reflects on Bill Gates’ 1990s obsession with “information management” and structured data, noting that deep neural networks unexpectedly solved the messiness problem that rigid schemas never could.

On bubbles: unlike the dark fiber overbuild of 2000, today Microsoft is sold out and struggling to add capacity fast enough. Demand is proven and immediate.

On the future of work: Nadella manages by “wandering Teams channels” rather than physical halls. He stays deeply connected to startups (he visited Stripe when it was tiny) because that’s where new workloads and aesthetics are born.

UI prediction: we’re moving toward personalized, generated IDEs for every profession – think “mission control” dashboards for orchestrating thousands of agents with micro-steering.

Excel’s immortality: it’s Turing-complete, instantly malleable, and the most approachable programming environment ever created.

Agentic commerce: Stripe and Microsoft are partnering to make every catalog queryable and purchasable by agents. Discovery and directed search will move almost entirely to conversational/AI interfaces.

Company sovereignty in the AI era: the new moat is your own fine-tuned foundation model (or LoRA layer) that continually learns your tacit knowledge, combined with memory, entitlements, and tool use that stay outside the base model.

Microsoft’s AI stack strategy: deliberately modular (infra, agent platform, horizontal & vertical Copilots) so customers can enter at any layer while still benefiting from integration when they want it.

My Thoughts

Two things struck me hardest:

- Nadella is remarkably calm for someone steering a $3T+ company through the biggest platform shift in decades. There’s no triumphalism – just relentless focus on distribution inside enterprises and solving the boring data plumbing.

- He genuinely believes the proprietary-vs-open cycle is repeating: just as AOL/MSN lost to the open web, only for Google, Facebook, and the app stores to become the new gatekeepers, today’s “open” foundation models will quickly sprout proprietary organizing layers (chat front-ends, agent marketplaces, vertical Copilots). Power accrues to whoever builds the best ensemble + tools + memory stack, not to whoever has the largest raw parameter count.

If he’s right, the winners of this cycle will be the companies that ship useful agents fastest – not necessarily the ones with the biggest training clusters. That’s excellent news for Stripe, Microsoft, and any founder-focused company that can move quickly.