A deep breakdown of Lex Fridman Podcast #494 featuring Jensen Huang, CEO of NVIDIA, covering extreme co-design, the four AI scaling laws, CUDA’s origin story, the future of programming, AGI timelines, and what it takes to lead the world’s most valuable company.

TLDW (Too Long, Didn’t Watch)

Jensen Huang sat down with Lex Fridman for a sprawling two-and-a-half-hour conversation covering the full arc of NVIDIA’s evolution from a GPU gaming company to the engine of the AI revolution. Jensen explains how NVIDIA now thinks in terms of rack-scale and pod-scale computing rather than individual chips, breaks down his four AI scaling laws (pre-training, post-training, test time, and agentic), and reveals the near-existential bet the company made putting CUDA on GeForce. He shares his views on China’s tech ecosystem, his deep respect for TSMC, why he turned down the chance to become TSMC’s CEO, how Elon Musk’s systems engineering approach built Colossus in record time, and why he believes AGI already exists. He also discusses why the future of programming is really about “specification,” why intelligence is being commoditized while humanity is the true superpower, and how he manages the enormous pressure of leading a company that nations and economies depend on. His core message: do not let the democratization of intelligence cause you anxiety. Instead, let it inspire you.

Key Takeaways

1. NVIDIA No Longer Thinks in Chips. It Thinks in AI Factories.

Jensen’s mental model of what NVIDIA builds has fundamentally changed. He no longer picks up a chip to represent a new product generation. Instead, he pictures a gigawatt-scale AI factory with power generation, cooling systems, and thousands of engineers bringing it online. The unit of computing at NVIDIA has evolved from GPU to computer to cluster to AI factory. The next mental “click,” he says, is planetary-scale computing.

2. Extreme Co-Design Is NVIDIA’s Secret Weapon

The reason NVIDIA dominates is not just better GPUs. It is the extreme co-design of the entire stack: GPU, CPU, memory, networking, switching, power, cooling, storage, software, algorithms, and applications. Jensen explains that when you distribute workloads across tens of thousands of computers and want them to go a million times faster (not just 10,000 times), every single component becomes a bottleneck. This is a restatement of Amdahl’s Law at scale. NVIDIA’s organizational structure directly reflects this co-design philosophy. Jensen has 60+ direct reports, holds no one-on-ones, and runs every meeting as a collective problem-solving session where specialists across all domains are present and contribute.

3. The Four AI Scaling Laws Are a Flywheel

Jensen outlined four distinct scaling laws that form a continuous loop:

Pre-training scaling: Larger models plus more data equals smarter AI. The industry panicked when people said data was running out, but synthetic data generation has removed that ceiling. Data is now limited by compute, not by human generation.

Post-training scaling: Fine-tuning, reinforcement learning from human feedback, and curated data continue to scale AI capabilities beyond what pre-training alone achieves.

Test-time scaling: Inference is not “easy,” as many predicted. It is thinking, reasoning, planning, and search, all of which are far more compute-intensive than memorization and pattern matching. This is why inference chips have not become the small, cheap commodity parts that were widely expected.

Agentic scaling: A single AI agent can spawn sub-agents, creating teams. This is like scaling a company by hiring more employees rather than trying to make one person faster. The experiences generated by agents feed back into pre-training, creating a flywheel.

4. The CUDA Bet Nearly Killed NVIDIA

Putting CUDA on GeForce was one of the most consequential technology decisions in modern history. It increased GPU costs by roughly 50%, which crushed the company’s gross margins at a time when NVIDIA was a 35% gross margin business. The company’s market cap dropped from around $7-8 billion to approximately $1.5 billion. But Jensen understood that install base defines a computing architecture, not elegance. He pointed to x86 as proof: a less-than-elegant architecture that defeated beautifully designed RISC alternatives because of its massive install base. CUDA on GeForce put a supercomputer in the hands of every researcher, every scientist, every student. It took a decade to recover, but that install base became the foundation of the deep learning revolution.

5. NVIDIA’s Moat Is Trust, Velocity, and Install Base

Jensen was direct about NVIDIA’s competitive advantage. The CUDA install base is the number one asset. Developers target CUDA first because it reaches hundreds of millions of computers and is available in every cloud, from every OEM, in every country, and across every industry. NVIDIA ships a new architecture roughly every year, and by Jensen’s account no company in history has built systems of this complexity at this cadence. Developers can also count on NVIDIA to maintain, improve, and optimize CUDA indefinitely. If someone created “GUDA” or “TUDA” tomorrow, it would not matter. The install base, velocity of execution, ecosystem breadth, and earned trust create a compounding advantage that is nearly impossible to replicate.

6. Jensen Believes AGI Is Already Here

When asked about AGI timelines, Jensen said he believes AGI has been achieved. His reasoning is practical: an agentic system today could plausibly create a web service, achieve virality, and generate a billion dollars in revenue, even if temporarily. This is not meaningfully different from many internet-era companies that did the same thing with technology no more sophisticated than what current AI agents can produce. He does not believe 100,000 agents could build another NVIDIA, but he believes a single agent-driven viral product is within reach right now.

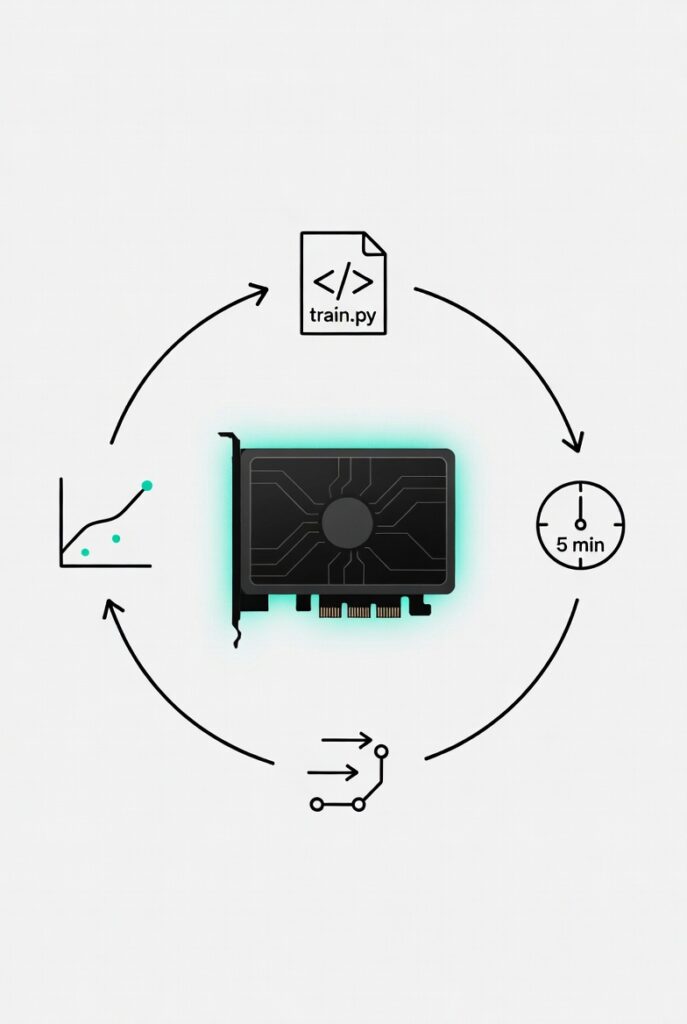

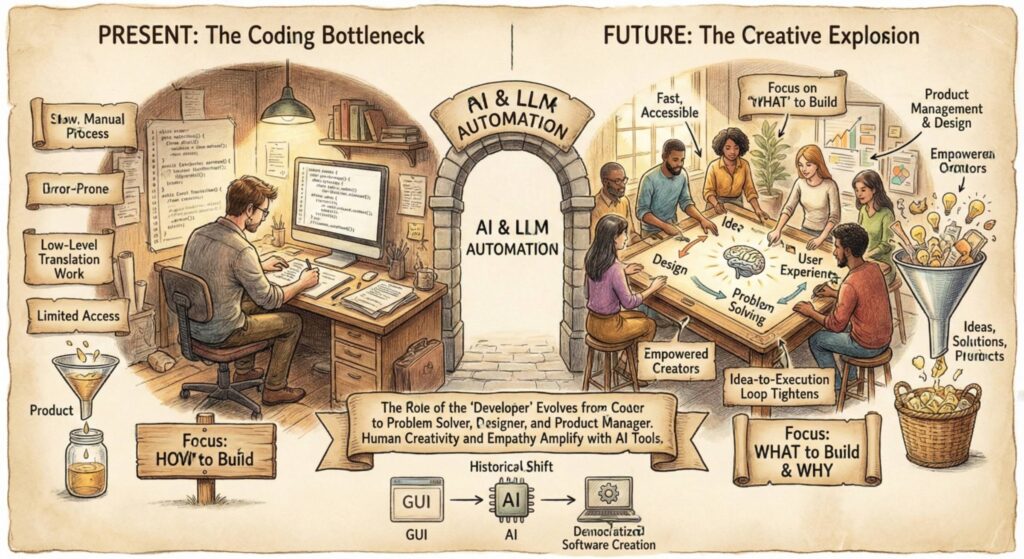

7. The Future of Programming Is Specification, Not Syntax

Jensen believes the number of programmers in the world will increase dramatically, not decrease. His reasoning: the definition of coding is expanding to include specification and architectural description in natural language. This expands the population of “coders” from roughly 30 million professional developers to potentially a billion people. Every carpenter, plumber, accountant, and farmer who can describe what they want a computer to build is now a coder. The artistry of the future is knowing where on the spectrum of specification to operate, from highly prescriptive to exploratory and open-ended.

8. China Is the Fastest Innovating Country in the World

Jensen gave a nuanced and detailed explanation of why China’s tech ecosystem is so formidable. About 50% of the world’s AI researchers are Chinese. China’s tech industry emerged during the mobile cloud era, so it was built on modern software from the start. Competition between provinces creates an intensely competitive internal environment. And the cultural norm of knowledge-sharing through school and family networks means China effectively operates as an open-source ecosystem at all times. This is why Chinese companies contribute disproportionately to open source: their engineers’ brothers, friends, and schoolmates work at competing companies, and sharing knowledge is the cultural default.

9. The Power Grid Has Enormous Waste That AI Can Exploit

Jensen proposed a pragmatic solution to the energy problem for AI data centers. Power grids are designed for worst-case conditions with margin, but 99% of the time they run at around 60% of peak capacity. That idle capacity is simply wasted. Jensen wants data centers to negotiate flexible contracts where they absorb excess power most of the time and gracefully degrade during rare peak demand periods. This requires three things: customers accepting that “six nines” uptime may not always be necessary, data centers that can dynamically shift workloads, and utilities that offer tiered power delivery contracts instead of all-or-nothing commitments.

10. Jensen Turned Down the CEO Role at TSMC

In 2013, TSMC founder Morris Chang offered Jensen the chance to become CEO of TSMC. Jensen confirmed the story is true and said he was deeply honored. But he had already envisioned what NVIDIA could become and felt it was his sole responsibility to make that vision happen. He sees the relationship with TSMC as one built on three decades of trust, hundreds of billions of dollars in business, and zero formal contracts.

11. Elon Musk’s Systems Engineering Approach Is Instructive

Jensen praised Elon Musk’s approach to building the Colossus supercomputer in Memphis in just four months. He highlighted several principles: Elon questions everything relentlessly, strips every process down to the minimum necessary, is physically present at the point of action, and his personal urgency creates urgency in every supplier. Jensen drew a parallel to NVIDIA’s own “speed of light” methodology, where every process is benchmarked against the physical limits of what is possible, not against historical baselines.

12. Intelligence Is a Commodity. Humanity Is Not.

Perhaps the most philosophical takeaway from the conversation: Jensen argued that intelligence is a functional, measurable thing that is being commoditized. He surrounded himself with 60 direct reports who are all “superhuman” in their respective domains, more educated and deeper in their specialties than he is. Yet he sits in the middle orchestrating all of them. This proves that intelligence alone does not determine success. Character, compassion, grit, determination, tolerance for embarrassment, and the ability to endure suffering are the real differentiators. Jensen wants the audience to understand that the word we should elevate is not intelligence but humanity.

Detailed Summary

From GPU Maker to AI Infrastructure Company

The conversation opened with Jensen explaining NVIDIA’s evolution from chip-scale to rack-scale to pod-scale design. The Vera Rubin pod, announced at GTC, contains seven chip types, five purpose-built rack types, 40 racks, 1.2 quadrillion transistors, nearly 20,000 NVIDIA dies, over 1,100 Rubin GPUs, 60 exaflops of compute, and 10 petabytes per second of scale-up bandwidth. And that is just one pod. NVIDIA plans to produce roughly 200 of these pods per week.

Jensen explained that extreme co-design is necessary because the problems AI must solve no longer fit inside a single computer. When you distribute a workload across 10,000 computers but want a million-fold speedup, everything becomes a bottleneck: computation, networking, switching, memory, power, cooling. This is fundamentally an Amdahl’s Law problem at planetary scale. If computation represents only 50% of the workload, speeding it up infinitely only doubles total throughput. Every layer must be co-optimized simultaneously.
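The 50% example above can be checked with a few lines of Python. This is a minimal sketch of the standard Amdahl’s Law formula, not anything shown in the episode; the function names and the sample speedup values are illustrative assumptions.

```python
import math


def amdahl_speedup(accelerated_fraction: float, component_speedup: float) -> float:
    """Overall speedup when only `accelerated_fraction` of the work gets faster.

    Standard Amdahl's Law: 1 / ((1 - p) + p / s).
    """
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be between 0 and 1")
    if component_speedup <= 0:
        raise ValueError("component_speedup must be positive")
    untouched = 1.0 - accelerated_fraction
    return 1.0 / (untouched + accelerated_fraction / component_speedup)


def speedup_ceiling(accelerated_fraction: float) -> float:
    """Upper bound on overall speedup as the accelerated part becomes infinitely fast."""
    untouched = 1.0 - accelerated_fraction
    return math.inf if untouched == 0.0 else 1.0 / untouched


if __name__ == "__main__":
    # Hypothetical case from the text: computation is 50% of the workload.
    for s in (10, 1_000, 1_000_000):
        print(f"compute {s:>9,}x faster -> {amdahl_speedup(0.5, s):.6f}x overall")
    print(f"ceiling with 50% accelerated: {speedup_ceiling(0.5):.1f}x")
```

However large the compute speedup gets, the overall figure stays below 2x while the other half of the workload is untouched. Under this formula, a million-fold overall speedup needs the non-accelerated share to fall below one millionth of the work, which is the arithmetic case for co-optimizing every layer at once.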

NVIDIA’s organizational structure is a direct reflection of this co-design philosophy. Jensen has more than 60 direct reports, almost all with deep engineering expertise. He does not do one-on-ones. Every meeting is a collective problem-solving session where the memory expert, the networking expert, the cooling expert, and the power delivery expert are all in the room together, attacking the same problem.

The Strategic History of CUDA

Jensen walked through the step-by-step journey from graphics accelerator to computing platform. The company invented a programmable pixel shader, then added IEEE-compatible FP32 to its shaders, then put C on top of that (called Cg), and eventually arrived at CUDA. The critical strategic decision was putting CUDA on GeForce, a consumer product.

This was nearly an existential move. It increased GPU costs by roughly 50% and consumed all of the company’s gross profit at a time when NVIDIA was a 35% gross margin business. The market cap cratered from around $7-8 billion to approximately $1.5 billion. But Jensen understood a principle that many technologists overlook: install base defines a computing architecture. x86 survived not because it was elegant but because it was everywhere. CUDA on GeForce put a supercomputing capability in the hands of every gamer, every student, every researcher who built their own PC. When the deep learning revolution arrived, CUDA was already the foundation.

How Jensen Leads and Makes Decisions

Jensen described a leadership philosophy built on continuous reasoning in public. He does not make announcements in the traditional sense. Instead, he shapes the belief systems of his employees, board, partners, and the broader industry over months and years by reasoning through decisions step by step, using every new piece of external information as a brick in the foundation. By the time he formally announces a strategic direction, the reaction is not surprise but rather, “What took you so long?”

He applies this same approach to his supply chain. He personally visits CEOs of DRAM companies, packaging companies, and infrastructure providers. He explains the dynamics of the industry, shares his vision of future demand, and helps them reason through why they should make multi-billion-dollar capital investments. Three years ago, he convinced DRAM CEOs that HBM memory would become mainstream for data centers, which sounded ridiculous at the time. Those companies had record years as a result.

Jensen’s “speed of light” methodology is his framework for decision-making. Every process, every design, every cost is benchmarked against the physical limits of what is theoretically possible. He prefers this to continuous improvement, which he views as incrementalism. He would rather strip a 74-day process back to zero and ask, “If we built this from scratch today, how long would it take?” Often the answer is six days, and the remaining 68 days are filled with accumulated compromises that can be challenged individually.

AI Scaling Laws and the Future of Compute

Jensen broke down the four scaling laws in detail. The pre-training scaling law, which depends on model size and data volume, was thought to be hitting a wall when the industry worried about running out of high-quality human-generated data. Jensen argued this concern is misplaced. Synthetic data generation has effectively removed the ceiling, and the constraint is now compute, not data.

Post-training continues to scale through fine-tuning and reinforcement learning. Test-time scaling was the most counterintuitive for the industry. Many predicted that inference would be “easy” and that inference chips would be small, cheap, and commoditized. Jensen saw this as fundamentally wrong. Inference is thinking: reasoning, planning, search, decomposing novel problems into solvable pieces. Thinking is much harder than reading, and test-time compute is intensely resource-hungry.

Agentic scaling is the newest frontier. A single AI agent can spawn sub-agents, effectively multiplying intelligence the way a company scales by hiring. The experiences and data generated by agentic systems feed back into pre-training, creating a continuous improvement loop. Jensen described this as the reason NVIDIA designed the Vera Rubin rack architecture differently from the Grace Blackwell architecture. Grace Blackwell was optimized for running large language models. Vera Rubin is designed for agents, which need to access files, use tools, do research, and spin off sub-agents. NVIDIA anticipated this architectural shift two and a half years before tools like OpenClaw arrived.

China, TSMC, and the Global Supply Chain

Jensen provided a thoughtful analysis of China’s tech ecosystem. He identified several structural advantages: 50% of the world’s AI researchers are Chinese, the tech industry was born during the mobile cloud era (making it natively modern), provincial competition creates internal Darwinian pressure, and the culture of knowledge-sharing through school and family networks makes China effectively open-source by default.

On TSMC, Jensen emphasized that the deepest misunderstanding about the company is that its technology is its only advantage. Its manufacturing orchestration system, which dynamically manages the shifting demands of hundreds of companies, is “completely miraculous.” Its culture uniquely balances bleeding-edge technology excellence with world-class customer service. And the trust that Jensen places in TSMC is extraordinary: three decades of partnership, hundreds of billions of dollars in business, and no formal contract.

Jensen also discussed the AI supply chain more broadly. NVIDIA has roughly 200 suppliers contributing technology to each rack. Jensen personally manages these relationships, flying to supplier sites, explaining industry dynamics, and helping CEOs reason through multi-billion-dollar investment decisions. When asked if supply chain bottlenecks keep him up at night, he said no, because he has already communicated what NVIDIA needs, his partners have told him what they will deliver, and he believes them.

The Energy Challenge and Space Computing

On the energy front, Jensen proposed a practical approach to the power problem. Rather than waiting for new power generation, he wants to capture the enormous waste already present in the grid. Power infrastructure is designed for worst-case peak demand, but 99% of the time it runs far below capacity. AI data centers could absorb this excess capacity with flexible contracts that allow graceful degradation during rare peak periods.
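To get a feel for the scale of that idle capacity, here is a rough back-of-the-envelope sketch. It uses the figures quoted earlier in this breakdown (about 60% of peak, 99% of the time) and assumes, as a deliberate simplification, that the headroom is constant in those hours and zero in the rest. The 1,000 MW grid size and the function name are hypothetical, chosen only for illustration.

```python
HOURS_PER_YEAR = 8760  # non-leap year


def flexible_energy_mwh(
    peak_capacity_mw: float,
    typical_load_fraction: float = 0.60,  # "around 60% of peak" from the summary
    off_peak_share: float = 0.99,         # "99% of the time" from the summary
    hours: int = HOURS_PER_YEAR,
) -> float:
    """Energy (MWh per year) a fully flexible load could absorb from idle headroom.

    Simplifying assumption: headroom is constant during off-peak hours and
    completely unavailable during the remaining peak hours.
    """
    if not 0.0 <= typical_load_fraction <= 1.0:
        raise ValueError("typical_load_fraction must be between 0 and 1")
    if not 0.0 <= off_peak_share <= 1.0:
        raise ValueError("off_peak_share must be between 0 and 1")
    headroom_mw = peak_capacity_mw * (1.0 - typical_load_fraction)
    return headroom_mw * hours * off_peak_share


if __name__ == "__main__":
    grid_peak_mw = 1_000.0  # hypothetical regional grid, not a figure from the episode
    energy = flexible_energy_mwh(grid_peak_mw)
    curtailed_hours = HOURS_PER_YEAR * (1.0 - 0.99)
    print(f"Idle headroom: {grid_peak_mw * 0.4:,.0f} MW")
    print(f"Absorbable energy: {energy:,.0f} MWh/year")
    print(f"Hours of curtailment: {curtailed_hours:,.1f} per year")
```

Real grids have hourly and seasonal load curves, so an actual estimate would integrate over measured load data rather than use two constants. The point of the sketch is only that a load willing to be curtailed for about 1% of the year could draw on a large share of capacity that already exists.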

On space computing, NVIDIA already has GPUs in orbit for satellite imaging. Jensen acknowledged the cooling challenge (no conduction or convection in space, only radiation) but sees it as a future frontier worth cultivating. In the meantime, he is focused on the lower-hanging fruit of eliminating waste in the terrestrial power grid.

On AGI, Jobs, and the Human Future

Jensen stated directly that he believes AGI has been achieved, at least by the practical definition of an AI system capable of creating a billion-dollar company. He sees it as plausible that an agent could build a viral web service that briefly generates enormous revenue, just as many internet-era companies did with technology no more sophisticated than what current AI agents produce.

On jobs, Jensen was both compassionate and clear-eyed. He told the story of radiology: computer vision became superhuman around 2019-2020, and the prediction was that radiologists would disappear. Instead, the number of radiologists grew because AI allowed them to study more scans, diagnose better, and serve more patients. The purpose of the job (diagnosing disease) did not change, even though the tools changed completely.

He applied this principle broadly: the number of software engineers at NVIDIA will grow, not decline, because their purpose is solving problems, not writing lines of code. The number of programmers globally will grow because the definition of coding is expanding to include natural language specification, opening it up to potentially a billion people.

His advice to anyone worried about their job is straightforward: go use AI now. Become expert in it. Every profession, from carpenter to pharmacist to lawyer, will be elevated by AI tools. The people who learn to use AI will be the ones who get hired, promoted, and empowered.

Mortality, Succession, and Legacy

The conversation closed with deeply personal reflections. Jensen said he really does not want to die. He sees the current moment as a “once in a humanity experience.” He does not believe in traditional succession planning. Instead, he believes the best succession strategy is to pass on knowledge continuously, every single day, in every meeting, as fast as possible. His hope is to die on the job, instantaneously, with no long period of suffering.

He described a vision for a kind of digital continuity: sending a humanoid robot into space, continuously improving it in flight, and eventually transmitting a consciousness derived from a lifetime of communications, decisions, and reasoning, which would catch up with the robot at the speed of light.

On the emotional experience of leading NVIDIA, Jensen was candid about hitting psychological low points regularly. His coping mechanism is decomposition: break the problem into pieces, reason about what you can control, tell someone who can help, share the burden, and then deliberately forget what is behind you. He compared this to the mental discipline of great athletes who focus only on the next point.

His final message was about the relationship between intelligence and humanity. Intelligence, he argued, is functional. It is being commoditized. Humanity, character, compassion, grit, tolerance for embarrassment, and the capacity for suffering are the true superpowers. The word society should elevate is not intelligence but humanity.

Thoughts

This is one of the most substantive CEO interviews of 2026. What makes it remarkable is not just the breadth of topics but the depth of reasoning Jensen demonstrates in real time. You can actually watch him think through problems on the spot, which is rare for someone at his level.

A few things stand out. First, the CUDA origin story is one of the great strategic narratives in tech history. Absorbing a roughly 50% cost increase on a consumer product, watching the market cap collapse by about 80%, and holding course for a decade out of conviction about the power of install base is what separates generational companies from everyone else.

Second, Jensen’s framing of the four scaling laws as a flywheel is the clearest articulation anyone has given of why AI compute demand will continue to accelerate. Most people understand pre-training. Fewer understand test-time scaling. Almost nobody is thinking about agentic scaling as a compute multiplier. Jensen has been thinking about it for years and already designed hardware for it before the software ecosystem caught up.

Third, the discussion on jobs deserves attention. The radiology example is powerful because it is a completed experiment, not a prediction. The profession that was supposed to be eliminated first by AI instead grew. The mechanism is straightforward: when you automate the task, you expand the capacity of the purpose, and demand for the purpose increases. This does not mean there will be no pain or dislocation. Jensen acknowledged that explicitly. But the historical pattern is clear.

Finally, the philosophical distinction between intelligence and humanity is the kind of framing that could genuinely help people navigate the anxiety of this moment. If you define your value by your intelligence alone, AI commoditization is terrifying. If you define your value by your character, your compassion, your tolerance for suffering, and your willingness to keep going when everything goes wrong, then AI is just the most powerful set of tools you have ever been given.

Jensen Huang is in his early sixties, has run NVIDIA since co-founding it in 1993, and shows no signs of slowing down. If anything, his conviction about the future is accelerating alongside his company’s growth.

Watch the full episode: Lex Fridman Podcast #494 with Jensen Huang