TLDW (Too Long, Didn’t Watch)

Amol Avasare, Head of Growth at Anthropic, sat down with Lenny Rachitsky to explain how Anthropic grew from $1 billion to over $19 billion in annual recurring revenue (ARR) in just 14 months. He breaks down CASH (Claude Accelerates Sustainable Hypergrowth), the internal tool Anthropic uses to automate growth experimentation, and explains why 50 to 70 percent of traditional growth playbooks are now obsolete, why the PM-to-engineer ratio may need to flip, and how Anthropic’s early bet on AI coding created a research flywheel that competitors are only now starting to copy. He also shares how he cold emailed his way into the job, why activation is the single hardest problem in AI products, and how he uses Cowork to automatically detect team misalignment across Slack channels.

Key Takeaways

1. Anthropic’s growth trajectory is historically unprecedented. Revenue went from $1 billion ARR at the start of 2025 to over $19 billion ARR by February 2026. That 19x growth in 14 months dwarfs the trajectories of companies like Atlassian, Snowflake, and Palantir, which took 15 to 20 years to reach $4.5 to $6 billion ARR. The number Amol quoted was already outdated by the time the episode aired.

2. Anthropic is automating growth experimentation with an internal tool called CASH. CASH stands for Claude Accelerates Sustainable Hypergrowth. The growth platform team uses Claude to identify opportunities, build experiments (mostly copy changes and minor UI tweaks so far), test them against quality and brand standards, and analyze results. Amol describes the current win rate as roughly equivalent to that of a junior PM with two to three years of experience, but notes that the system was not possible at all before Opus 4.5 and has improved significantly with Opus 4.6. Humans still review experiments before they ship, but the time spent on review is decreasing week over week.

3. Activation is the single highest-leverage growth problem in AI. The core challenge is capability overhang: models are improving so fast that users do not know what the models can do. By the time you have tested and optimized onboarding for one model’s capabilities, the next model has already shipped with entirely new capabilities that make your learnings obsolete. Anthropic addresses this by adding intentional friction to onboarding to understand who users are and route them to the right products and features.

4. Anthropic indexes 70/30 toward big bets, the opposite of most growth teams. Traditional growth teams spend 60 to 70 percent of effort on small to medium optimizations. Anthropic flips that ratio because they believe the product value delivered two years from now will be 100x to 1,000x what it is today. In that exponential environment, micro-optimizations capture a negligible percentage of future value. Large strategic bets are where the leverage lives.

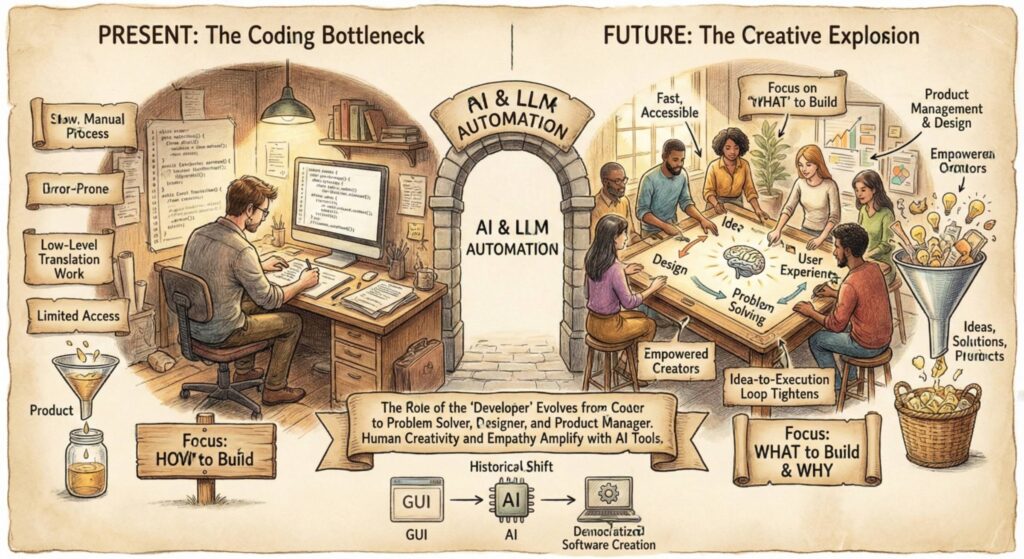

5. The PM-to-engineer ratio may need to flip. Engineers are getting 2 to 3x more productive with tools like Claude Code, effectively turning a team of 5 engineers into the equivalent of 15 to 20. But PMs and designers have not seen the same multiplier. The result is that product management and design are “absolutely squeezed.” Anthropic is responding by hiring more PMs and deputizing product-minded engineers to act as mini-PMs on projects of two engineering weeks or less. The counterintuitive insight: companies may need more PMs, not fewer, as AI accelerates engineering output.

6. Cold emailing still works if you do it right. Amol got his job by cold emailing Mike Krieger, Anthropic’s Chief Product Officer (and co-founder of Instagram), at a time when no growth role was even listed. Key tactics: use a high-converting subject line you have tested over time, find personal email addresses instead of competing in crowded LinkedIn inboxes, keep the message extremely short, and follow up relentlessly until someone explicitly asks you to stop.

7. PRDs are largely obsolete at Anthropic. Amol estimates that 60 to 80 percent of what his team ships does not have a formal PRD. For small projects, coordination happens entirely in Slack. For larger initiatives, he will sometimes throw his thoughts into Cowork five minutes before a kickoff meeting to generate a rough document. His default philosophy: if you can skip the doc and jump straight to prototyping or action, do it.

8. The AI coding bet created a research flywheel. Anthropic’s deep focus on coding was not just a commercial play. A document written by co-founder Ben Mann in 2021, just months after the company was founded, laid out the case for focusing on AI coding because better coding models would accelerate their own researchers, which would produce better models, which would produce better coding tools, in a compounding loop. This is something competitors are only now starting to recognize and copy.

9. Cowork is being used to detect organizational misalignment. Amol runs a weekly scheduled task in Cowork that uses the Slack MCP (Model Context Protocol) integration to scan conversations across the company and surface areas of potential misalignment. He describes cases where this caught teams about to do overlapping work or spin their wheels on conflicting priorities. He also uses Cowork to simulate coaching sessions with his manager, Ami Vora, by asking Claude to analyze her public writing and internal Slack activity and then deliver feedback from her perspective.

10. Anthropic’s culture is its most defensible moat. Amol describes a culture where every single person is fully engaged, nobody is checked out, and there is radical transparency through “notebook channels” on Slack where anyone, including leadership, shares their thinking publicly. Employees openly challenge Dario Amodei in these channels after all-hands meetings. These notebook channels also serve a practical purpose: they become context that helps Claude understand how different teams think and operate.

Detailed Summary

The Cold Email That Started It All

Amol Avasare was not recruited through a job listing, a referral, or a sourcing pipeline. He cold emailed Mike Krieger, Anthropic’s CPO and the co-founder of Instagram, with a short pitch: he loved the product, thought Anthropic badly needed a growth team, and wanted to talk. At the time, Anthropic had no growth roles posted. They were just beginning to think about it internally, and the timing was perfect.

Amol’s approach to cold email is methodical. He has a subject line formula he has refined over years of founder outreach that produces abnormally high open rates (he declined to share the exact copy). He targets personal email addresses rather than work inboxes or LinkedIn, where competition for attention is fierce. The message itself is brutally short: who he is, why he would be a fit, and a request to chat. His follow-up philosophy is to keep reaching out until someone tells him to stop. Krieger responded on the first attempt.

What $1B to $19B in 14 Months Actually Feels Like

From the inside, Anthropic’s growth does not feel like a victory lap. Amol describes it as the hardest job he has ever had, harder than being a founder and harder than investment banking. About 70 percent of his time goes to what the team calls “success disasters,” which are problems created by things going extremely well. All the charts are green and up and to the right, but the underlying infrastructure, processes, and systems are constantly breaking under the strain of hypergrowth.

The revenue trajectory tells the story: $0 to $100 million in 2023, $100 million to $1 billion in 2024, $1 billion to roughly $10 billion in 2025, and already $19 billion ARR by the end of February 2026. Amol notes that at the end of 2024, Dario Amodei was pushing for growth targets that the team thought were impossible. Those targets were hit and exceeded. The internal culture has adapted accordingly. Linear charts are considered uncool. Everything is presented on a log-linear scale.
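To put the trajectory in concrete terms, here is a short Python sketch of the arithmetic on the figures quoted above, taking roughly $1 billion ARR at the start of 2025 and $19 billion at the end of February 2026, about 14 months later. It computes the implied compound monthly growth rate and shows why a log-linear chart is the natural view: on a log10 axis, each 10x step between the approximate year-end milestones occupies the same vertical distance. The function name `cmgr` is an illustrative label, and the inputs are the rounded numbers from this summary, not Anthropic’s reported financials.

```python
import math


def cmgr(start: float, end: float, months: int) -> float:
    """Compound monthly growth rate that turns `start` into `end` over `months` months."""
    return (end / start) ** (1 / months) - 1


# Rounded ARR figures quoted in this summary, in US dollars.
start_arr = 1e9   # start of 2025
end_arr = 19e9    # end of February 2026, about 14 months later
rate = cmgr(start_arr, end_arr, 14)
print(f"Implied compound monthly growth: {rate:.1%}")

# Approximate year-end ARR milestones from the same paragraph.
milestones = {"end of 2023": 1e8, "end of 2024": 1e9, "end of 2025": 1e10}
for label, arr in milestones.items():
    # On a log10 axis each 10x step is one unit, so steady 10x years plot as a straight line.
    print(f"{label}: ${arr / 1e9:g}B ARR, log10 = {math.log10(arr):.1f}")
```

Run as written, this works out to roughly 23 percent growth compounded every month, and each milestone sits exactly one unit higher on the log axis than the one before it. On a linear axis the same data would look like a flat line followed by a near-vertical wall.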

Why Activation Is the Hardest Problem in AI

The central growth challenge for AI products is not acquisition. It is activation: getting users to understand what the product can actually do for them. Amol frames this as a capability overhang problem. Models are improving so rapidly that even internal teams struggle to keep up with what is newly possible. If Anthropic employees have to carve out dedicated time to explore a new model’s capabilities, the average user is even further behind.

The danger is that someone signs up for Claude, asks it about the weather, and walks away thinking that is all it does. The product development cycle for onboarding is also under strain: by the time you have run tests, gathered learnings, and shipped an optimized activation flow for one model generation, the next model has shipped with capabilities that make your work irrelevant.

Anthropic’s approach borrows from Amol’s experience at Mercury and MasterClass. They add deliberate friction to the signup flow, asking users questions about who they are and what they want to accomplish. This allows them to route users to the right products and features. The data also feeds downstream into lifecycle marketing and ad targeting. Amol has seen this pattern work consistently across every company he has worked at: the right friction, applied at the right time, outperforms frictionless flows that dump users into a blank canvas with no guidance.

The CASH System: Automating Growth Experimentation

Anthropic’s growth platform team, led by Alexey Komissarouk (who teaches growth engineering at Reforge), has built an internal system called CASH. The name stands for Claude Accelerates Sustainable Hypergrowth.

CASH operates on a four-stage loop. First, Claude identifies growth opportunities by analyzing trends, metrics, and past experiment results. Second, Claude builds the actual feature or change. Third, Claude tests the output against quality and brand standards. Fourth, Claude analyzes the results and gathers learnings after the experiment ships.

Currently, CASH handles mostly copy changes and minor UI tweaks. The win rate is comparable to a junior PM with two to three years of experience. A senior PM would still do better. But the trajectory matters: this was not possible at all before Opus 4.5 launched, and results have improved meaningfully with Opus 4.6. Human approval is still required before shipping, but the amount of human time spent reviewing is decreasing week over week.
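The four-stage loop described above can be sketched as a pipeline with a human approval gate. This is a minimal, hypothetical illustration in Python, not Anthropic’s implementation: the `Experiment` type, `run_cash_cycle`, and every stage function are invented names, and in a real system each stage would presumably be backed by a model call rather than the placeholder lambdas used here. What the sketch captures is the ordering: automated quality and brand checks run before a human sees anything, and learnings are gathered only for experiments that actually ship.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Experiment:
    hypothesis: str
    change: Optional[str] = None
    passed_checks: bool = False
    approved: bool = False
    learnings: list[str] = field(default_factory=list)


def run_cash_cycle(
    identify: Callable[[], str],
    build: Callable[[str], str],
    check_quality_and_brand: Callable[[str], bool],
    human_approves: Callable[[Experiment], bool],
    analyze: Callable[[Experiment], list[str]],
) -> Experiment:
    """One pass through a hypothetical identify -> build -> check -> analyze loop."""
    # Stage 1: pick an opportunity from trends, metrics, and past experiment results.
    exp = Experiment(hypothesis=identify())
    # Stage 2: build the change (today mostly copy edits and small UI tweaks).
    exp.change = build(exp.hypothesis)
    # Stage 3: test the output against quality and brand standards.
    exp.passed_checks = check_quality_and_brand(exp.change)
    if not exp.passed_checks:
        return exp  # rejected before a human ever reviews it
    # Human approval is still required before anything ships.
    exp.approved = human_approves(exp)
    if not exp.approved:
        return exp
    # Stage 4: after the experiment ships, analyze results and record learnings.
    exp.learnings = analyze(exp)
    return exp


# Placeholder stages standing in for model calls (all hypothetical).
result = run_cash_cycle(
    identify=lambda: "Clearer upgrade-button copy may raise click-through",
    build=lambda hypothesis: "Button text: 'Upgrade' -> 'See plans'",
    check_quality_and_brand=lambda change: len(change) < 80,
    human_approves=lambda exp: True,
    analyze=lambda exp: ["Compare click-through against control before the next cycle"],
)
print(result)
```

Because each stage is passed in as a function, the human approval step can be tightened or relaxed independently of the others, which mirrors the point that human review is still required but shrinking over time.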

The part that Claude still cannot handle well is cross-functional stakeholder management. Getting six people in a room to align on a decision remains a fundamentally human problem. As Amol’s head of design joked: “We will have AGI and it will still be impossible to get six people in a room to get aligned.”

Why the PM-to-Engineer Ratio Might Flip

This is one of the most counterintuitive insights from the conversation. The conventional assumption is that AI will reduce the need for PMs. Amol argues the opposite: companies may need more PMs, at least in the near term.

The math is straightforward. Tools like Claude Code are making engineers 2 to 3x more productive. A team of 5 engineers now produces the output equivalent of 15 to 20 engineers in the pre-AI era. PMs and designers have seen productivity gains, but not at the same multiplier. The result is a bottleneck: one PM directing what used to be 15 to 20 engineers’ worth of output while still handling cross-functional coordination, stakeholder alignment, and strategic direction.

Anthropic’s response is twofold. First, they are actively hiring more PMs. Second, they have formalized a system where product-minded engineers act as mini-PMs on any project that is two engineering weeks or less. The engineer handles everything: talking to legal, talking to security, managing stakeholders. The PM only steps in if things go badly off track.

For larger projects, the PM remains squarely accountable. But the key insight is about leverage: if you are one PM managing 20 engineers, the highest-value use of your time is not shipping the 21st feature yourself. It is getting 5 percent better at guiding the team on what the right opportunities are and upleveling every engineer’s product thinking.

The Coding Flywheel That Changed Everything

Anthropic’s deep bet on coding was not obvious at the time. A document from co-founder Ben Mann, dated 2021, laid out the strategic logic just months after the company was founded. The argument was that investing heavily in AI coding would create a compounding flywheel: better coding models would help Anthropic’s own researchers write code more effectively, which would accelerate model development, which would produce even better coding tools.

This early focus gave Anthropic a structural advantage that competitors are only now trying to replicate. It also explains why the company went so deep on B2B and enterprise use cases rather than chasing consumer attention. The commercial opportunity of coding was large on its own, but the internal research acceleration made it doubly strategic.

Amol notes that this focus was partly born from constraint. Anthropic was the smallest, least well-funded player in the space for years. They did not have Meta’s distribution or Google’s cash flow or OpenAI’s first-mover advantage. That constraint forced extreme focus, which is a principle Amol applies broadly. He calls it “freedom through constraints.”

How Amol Uses AI to Manage His Day

Amol’s personal AI usage is extensive and worth documenting for anyone looking to see how a power user at the frontier actually operates.

Every morning, a scheduled Cowork task reviews 20 to 25 charts across Anthropic’s products and sends him a summary of what needs attention. The false positive and false negative rates are improving week over week, giving him increasing confidence in delegating this monitoring.
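“False positive” and “false negative” have precise meanings for this kind of monitoring. Assuming someone labels, after the fact, which charts genuinely needed attention on a given day, a minimal Python sketch of both rates looks like this. The function name, chart names, and the flagged set are hypothetical, and nothing here describes how Cowork itself measures anything.

```python
def alert_error_rates(
    all_charts: set[str], flagged: set[str], needed_attention: set[str]
) -> tuple[float, float]:
    """Return (false_positive_rate, false_negative_rate) for one day's review."""
    fine = all_charts - needed_attention
    false_positives = flagged & fine               # flagged, but nothing was wrong
    false_negatives = needed_attention - flagged   # needed attention, but was missed
    fpr = len(false_positives) / len(fine) if fine else 0.0
    fnr = len(false_negatives) / len(needed_attention) if needed_attention else 0.0
    return fpr, fnr


# Hypothetical day: 24 charts, two genuinely needed attention, two were flagged.
charts = {f"chart_{i:02d}" for i in range(24)}
needed = {"chart_03", "chart_11"}
flagged = {"chart_03", "chart_07"}

fpr, fnr = alert_error_rates(charts, flagged, needed)
print(f"false positive rate: {fpr:.1%}, false negative rate: {fnr:.1%}")
```

In this made-up example, one of 22 healthy charts was wrongly flagged (about 4.5 percent) and one of the two real problems was missed (50 percent). Driving both numbers down week over week is what lets someone trust the summary enough to stop checking every chart by hand.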

He uses Cowork to handle administrative tasks he hates: booking meeting rooms, first-pass email triage, filing expense reports in Brex and reimbursements in Benepass. None of this requires his attention anymore.

For management, he runs weekly Cowork tasks that review what his direct reports have done, cross-reference their work against team OKRs and meeting transcripts, and surface feedback he should give them. He also runs a parallel task for himself, asking Claude to impersonate his manager Ami Vora based on her public writing and internal Slack activity, and deliver feedback from her perspective.

Perhaps most powerfully, he runs a weekly misalignment detection task that scans Slack conversations across the company and surfaces areas where teams may be working at cross purposes. He describes cases where this caught potentially expensive coordination failures before they compounded.

Notebook Channels and the Culture Moat

Anthropic uses “notebook channels” on Slack, which function like internal Twitter feeds where employees share their thinking, priorities, and provocative ideas publicly. Everyone has one, from researchers to growth PMs to Dario Amodei himself. Employees openly disagree with leadership in these channels, and that is encouraged.

These channels serve a dual purpose. First, they help scale cultural values and operating principles as the company grows rapidly. When Amol posts about “the importance of being comfortable leaving money on the table,” every new engineer on the growth team absorbs that principle. Second, and perhaps more importantly for the long term, these channels become structured context that Claude can reference. The HR team has even documented which internal documents Claude should reference for specific topics. Amol sees this as something every company will eventually need to do: share thinking in a structured way so that the AI agents running throughout the organization have the context they need.

AI Safety as Commercial Strategy

Anthropic is structured as a Delaware Public Benefit Corporation (PBC) rather than a standard Delaware C-Corp. This legally allows the company to weigh its stated public benefit alongside shareholder value, rather than being bound solely to maximize shareholder value.

Amol says the company has repeatedly taken significant commercial hits for safety reasons, including delaying product launches when safety risks were identified. He also makes a striking claim: what Anthropic says publicly about AI risk is actually a softer version of what they believe internally. The internal view on the potential downsides of powerful AI is more aggressive than the public messaging.

From a growth perspective, Amol frames safety as a long-term competitive advantage. Growth teams at most companies try to squeeze every last dollar. Anthropic’s growth team is “very comfortable forgoing metric impact” to protect brand, quality, and safety. He argues this is how all the best products operate, and that as the stakes of AI get higher, Anthropic’s credible commitment to safety will become a moat.

Advice for Thriving in the AI Era

Amol’s advice for product managers and growth practitioners boils down to four points. First, stay on top of the tools. Try every new model release. Something that did not work three months ago may work now, and you will not know unless you go back and test it. Second, go deep on your unique spike rather than trying to be well-rounded. The PM who can also design is a unicorn. The engineer who thinks like a PM is a unicorn. Find your interdisciplinary edge and double down. Third, be radically adaptable. Amol estimates that 50 to 70 percent of how you operated in the past is now irrelevant. Clinging to old playbooks creates friction. Fourth, think in exponentials, not linear projections. If you are looking at the AI landscape through a linear lens, you will consistently underestimate how quickly things are moving.

Thoughts

This interview is one of the most information-dense conversations about growth strategy in AI that has been published so far. A few things stand out.

The CASH system is the most concrete example yet of a company using AI to automate its own growth loop. The fact that it currently performs at a junior PM level is almost beside the point. What matters is the trajectory: it went from impossible to functional in a few months. If models continue improving at their current pace, this system will be operating at a senior PM level within a year. Every growth team at every AI company should be building their own version of this right now.

The PM ratio insight is genuinely surprising and underreported. The default assumption in the tech industry is that AI will reduce headcount across all functions. Amol is making the case that in the near term, the opposite is true for PMs. Engineering output is exploding, and someone needs to direct all that output toward the right problems. That is a fundamentally human, organizational, political job that AI is not close to automating.

The coding flywheel story is also worth highlighting because it shows the power of strategic focus in a world of unlimited possibilities. Anthropic had a generalist technology that could do almost anything, and they deliberately narrowed their focus to one vertical. That decision, made in 2021 before anyone knew what the market would look like, is arguably the single most important strategic bet in the company’s history.

Finally, the notebook channels concept deserves more attention. The idea that employees should share their thinking in structured, searchable formats is not just a culture tool. It is an infrastructure investment for an AI-native future where agents need organizational context to be effective. Companies that build this habit early will have a significant advantage when agent-driven workflows become the norm.

The uncomfortable subtext of this entire conversation is that Anthropic’s growth team, as talented as they clearly are, is riding a wave created almost entirely by the research team. Several YouTube commenters pointed this out, and Amol himself acknowledges it directly. The models are the product. The growth team’s job is to make sure users discover and adopt what the models can do. That is not a small job, especially at this scale, but it is a fundamentally different job than driving growth at a product that does not sell itself.