This is the full episode of Naval Ravikant’s conversation with three frontier founders: Guillermo Rauch of Vercel, Blake Scholl of Boom Supersonic, and Max Hodak of Science. The premise is that all three are building their own factories rather than assembling off-the-shelf parts, so the interesting question is not what they are building but what they are learning about how to build in the age of AI. Over roughly an hour the discussion moves from software factories and the thousand-x engineer into hardware, regulation, healthcare economics, autonomous companies, and a long closing argument about what humans can still uniquely do. Watch the full conversation on the Naval Podcast YouTube channel. We previously published two segments of this same discussion: part one, Waste Tokens to Save Time, on software factories and whether pure software is dead, and part two, Vibe Coding Hardware, on jet engines, vertical integration, and China’s open-source bet. This post covers the entire episode end to end.

TLDW

Four builders argue that AI has turned the engineer’s job from shipping output into building the factory that produces output, which is why token leaderboards are the new vanity metric and why you should waste tokens to save time. Guillermo Rauch frames the thousand-x engineer and the building-block economy, and asks whether pure software is dead now that models speak English. Blake Scholl shows how Boom turned hardware engineering into software, letting two engineers design an entire jet engine and collapsing months of regulatory compliance documentation into minutes. Max Hodak makes the case for extreme vertical integration, a captive MEMS foundry, and a sober counter to Silicon Valley deregulation triumphalism: the bottleneck is the voters and the regulator’s asymmetric incentives, not just bad rules. The group works through healthcare as a fixed-bucket non-market, China’s cost-reduction strategy and its approved implantable brain interface, autonomous software that runs site reliability and security research with thousands of concurrent agents, a company-wide hackathon where the receptionist shipped a real automation, and a long debate on creativity, out-of-distribution surprise, intent, attribution, and the definition of art. The throughline: humans become verifiers, value moves to creativity, taste, and agency, and the single best move is to get extremely good with the tools, because it is people with AI versus people without AI.

Thoughts

The strongest idea in the episode is the quiet redefinition of what an engineer is for. Rauch’s point is that you no longer judge a person by how well they ship a single output. You judge them by whether they can build the factory that produces outputs B through Z. That reframe instantly explains why token leaderboards are nonsense. Counting tokens consumed is the same category error as counting lines of code written, a measure of motion mistaken for a measure of progress. Naval’s “waste tokens, save time” is the correct response: tokens are cheaper than people, so optimize for your own wall-clock time and the final output, and throw three models at the same problem if that gets you unstuck faster. The uncomfortable corollary, which the group says out loud, is that leverage in idea domains was never linear. The hundred-x and thousand-x engineer is not a new phenomenon. AI just made it impossible to keep pretending otherwise.

The second thread that ties the whole hour together is verification. Everyone converges on the same future: humans stop producing the work directly and move up the stack to signing off on it. Rauch is precise about what that means. Saying “I understand this pull request” no longer requires reading every line. It requires being able to say you wrote the test harness, the proofs, the type checkers, and the simulations that let you stand behind it in production. That is a profound shift, because it accepts that the code may be spaghetti you do not fully understand while insisting that the evaluator around it is trustworthy. Blake extends the same logic to regulation, and this is the most underrated argument in the episode. If you treat a 200-page lightning-strike compliance document as a test suite and a regulation as an exit criterion for an agent loop, then a body of rules you once resented becomes a guard rail that lets you move faster, not slower. The cost of change collapses, change aversion drops, and you can finally afford to iterate on physical things.

Max Hodak is the adult in the room on regulation, and the episode is better for it. The Silicon Valley consensus is that regulation is simply friction to be deleted, and there is plenty of dysfunction to point at: the NRC permitting essentially zero nuclear plants for decades, the FDA’s asymmetric incentives where approving a bad drug ends a career but blocking a good one costs nothing visible. But Hodak keeps pulling the conversation back to the harder truth. This is where the voters are. If you removed the current regulatory package, something very similar would get voted right back in, because the asymmetry reflects how the public actually weighs a visible death against an invisible delay. Real reform is not “deregulate,” it is narrow and surgical: prohibit the FDA from drawing adverse inferences across different users of a compound, build innovation zones where people consent to different rules, or copy Europe’s notified-body model so review capacity can actually scale. That is a far more serious position than the usual abundance-or-bust framing.

The healthcare segment is the part of this conversation you will not find in the two clips, and it is the most heterodox. Hodak’s diagnosis is that healthcare is a fixed bucket of money that grows with tax receipts, not a technological growth industry where falling prices expand the market the way phones and laptops did. Because there is no real private market, you get a small communist society running inside a larger capitalist one, with the waiting lines and frozen product quality that implies. His prescription is not single payer and not insurance reform. It is to drive the cost of bringing devices and drugs to market so low that a patient can buy a restored sense or an extra decade of life on a credit card, the way they finance a car, and his warning is that China’s lower approval costs and its already-approved implantable brain interface put it on track to do exactly that. Whether or not you buy the twenty-percent-of-income deductible he floats, the framing that a private market is the missing feedback loop is the kind of argument that gets too little airtime.

The closing debate on creativity is where the four of them disagree most productively, and they are careful enough to notice that their conclusions follow from their definitions. Hodak defines art as meaningful out-of-distribution behavior, which lets a military maneuver or a math proof count, and leads him to think a sufficiently capable model gets there too. Naval defines art as conveying an emotion with intent, which makes attribution load-bearing: the same photo down to the last pixel means more when a human took it, and a startup doing hardware attestation of human authorship suddenly has a real market. The shared observation that should worry every builder is that AI output collapses to a distribution mean. Every Claude-built website ends up the same serif font, the same brown and cream, the same monospace spacing, recognizable as slop precisely because it is in-distribution. The optimistic read, and the one Naval lands the episode on, is that this leaves an enormous and durable lane for humans who can step outside the system, and that the practical move for everyone is simply to become excellent with the tools, because the real divide is people with AI versus people without.

Key Takeaways

- The job of an engineer has shifted from shipping a single output to building the factory that produces multiplicative outputs, so people are now judged on the leverage they create rather than the work they personally do.

- There were always 10x engineers, and in idea, intellectual, and digital domains the real spread is 100x or 1000x. AI leverage just made that gap impossible to deny.

- Token leaderboards and token consumption are the new lines-of-code: a measure of activity that does not map to value. Measure your own time and the final output instead.

- Waste tokens to save time. Models are still far cheaper than a human, so throwing Codex, Claude, and Gemini at the same problem repeatedly is rational even when it looks wasteful.

- Low-quality first-pass code is fine because you can spend more tokens later to harden it for production. The constraint is verifiable domains, not code quality.

- A model is roughly as good as you are in a domain. The quality of your prompting and reprompting strongly determines the output, though this dependence should fade as models improve.

- Models graduated from junior to principal engineers: they now return with multiple routes and tradeoffs rather than running away with the first idea, even if their time and cost estimates are often wrong.

- A junior gets knowledge they could never have produced alone, but an experienced architect still extracts far more juice. Taste and judgment, like picking Postgres versus ClickHouse, remain the human’s edge.

- Pure software’s moat is in question now that models speak fuzzy, sloppy English. For hardware founders this is a boon, since good software finally becomes cheap to produce.

- The building-block economy, from Mitchell Hashimoto, argues agents need powerful reusable infrastructure rather than reinventing queues and databases every time. Shared dependencies are a cooperation value, like everyone depending on the same Postgres version.

- Naval and Max both stopped writing code for years, then started building software they use daily through agents, on the strength of understanding how the pieces fit rather than syntax.

- With agents you stop getting stuck on narrow debugging problems that used to consume indefinite time. The intrinsic frustration that was once “how you learn” is largely gone.

- Boom turned siloed hardware engineering, much of it trapped in Excel and VBScript with no source control, into real software with automated testing and repeatable flows.

- Software engineers now build the architectures and hardware engineers vibe code their pieces. Two engineers can now design an entire jet engine, where analyzing a single turbine blade once took one engineer a full day, repeated across a thousand blades.

- Enterprise collaboration software and even spreadsheets are getting cooked, because you can now code the exact custom tool you need instead of approximating it.

- AI will soon generate STEP files and PCB layouts, bringing the current software boom to mechanical and electrical engineering, likely within the year.

- China is betting on open-source models because its hardware and supply-chain superiority pairs with on-demand software generation to erase Silicon Valley’s software advantage. Fall behind on generating software and you fall behind on generating everything.

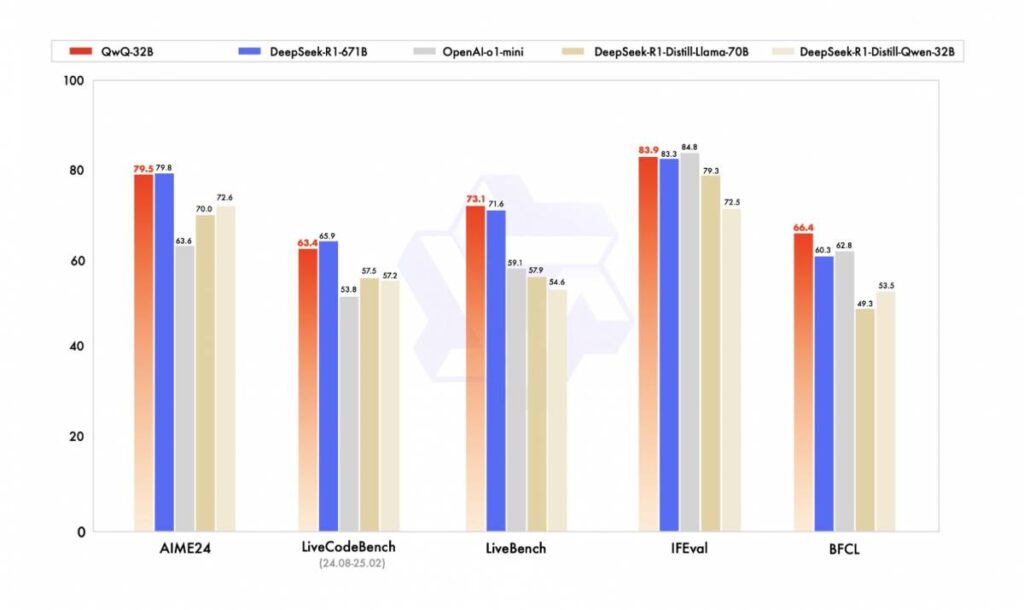

- In real usage, frontier intelligence dominates the top. Gemini “slaps at scale” as an industrial production model for support and browser automation, while Chinese models are not in the frontier coding tier.

- Intelligence is an unalloyed good. Because mistakes are invisible and models are cheaper than people, you reach for the smartest available model rather than running a weaker one many times.

- Max’s vertical integration thesis: when you cannot buy a part, you make it. Science owns a captive MEMS foundry because tighter integration toward a single block of bonded matter yields lower power, smaller size, and longer life.

- AI’s biggest near-term impact inside hardware companies is regulatory: generating documentation and tracing which of thousands of ISO standards apply, work that used to occupy a quality team for months.

- Junior engineers got promoted to senior and junior engineering got handed to agents. The same pattern hits law, where basic NDAs and red lines no longer require a lawyer.

- Humans are becoming verifiers. Signing off on a PR means standing behind its consequences via tests, proofs, and type checkers, not reading every line. Creating software is easy; keeping it secure, tested, and maintained 1000 days out is the real question.

- A RAG over regulatory documents collapses a 200-page compliance test plan from months to minutes, which cuts change aversion: you can alter the airplane and regenerate compliance instead of crying over rework.

- Regulations can act as a test suite and exit criteria for agent loops, as long as they are non-contradictory and reasonable. The alternative is shipping slop directly into the air.

- Physical building is guilty until proven innocent, illustrated by the absurdity of pre-filing a driving plan before every trip. The fix is more enforcement-based regulation rather than pre-approval, though agents on both sides could trigger a Red Queen race and DDoS already overwhelmed agencies.

- Regulation often fails to make things safer, only slower: the 737 Max shipped a single sensor with full authority over pitch, and the NRC kept us perfectly safe by approving almost no nuclear plants for decades.

- The deeper problem is the voters and the regulator’s asymmetric incentives. Approve a bad thing and your career ends; block a good thing and nobody notices. Removing one agency just elects its replacement.

- Targeted fixes beat blanket deregulation: bar adverse inferences across users of a compound, use single-patient IND pathways, create opt-in innovation and YIMBY zones, or adopt Europe’s competitive notified-body reviewers.

- Healthcare is a fixed bucket of money tied to tax receipts, not a growth industry, so spending 10x more on it would be a catastrophe rather than a triumph. With no private market you run a small communist society inside a capitalist one.

- The escape is lower cost-to-market, not single payer, so people can finance care like a car. China’s lower approval costs and its already-approved implantable BCI point that direction. LASIK, dental, and plastic surgery advance because patients pay directly.

- N-of-1 medicine works at the high end, as with GitLab’s Sid Sijbrandij outliving his cancer prognosis through a self-built escalation ladder, but it demands enormous agency at the patient’s weakest moment. AI should democratize that knowledge.

- Vercel automated much of site reliability engineering: anomalies fire alerts, an agent investigates, can open an incident, and begins remediation, stopping just short of changing production itself.

- Running an open-sourced security tool against the whole monorepo with 10,000 concurrent agents produced several quarters of security research in a couple of days for about $14,000 in tokens. Code translation and optimization are similarly autonomous now.

- Blake stopped all project work for a week and had everyone, receptionist to engineers, build something with AI and demo it. He expected mostly silly projects and got mostly needle movers, including a real automation from shipping and receiving.

- The autonomous company of the future may have a workforce that trains the agents doing the work rather than doing it directly, with tooling that extracts reusable skills from your inputs and outputs.

- Returns are shifting from intelligence toward agency for humans, since agents supply the intelligence. The people best fit for the future open a coding agent and ask what to build instead of defaulting to passive consumption.

- Maybe 10x more people are coding than a year ago, yet around 99% still never will, because to a non-coder the starting step remains unimaginable. Vibe coding is described as more addictive and entertaining than video games, with real output.

- AI video lacks taste and judgment for now, but by 2030 expect fan-made films: dozens of Lord of the Rings takes, or generating unmade seasons of The Expanse from the books. The bigger prize is a genuinely new imaginative work, not a remix.

- What humans uniquely do is generate meaningful surprise out of the training distribution, with intent that makes it mean something. Gödel stepping outside the formal system is the archetype; Claude’s identical-looking websites are the counterexample of in-distribution slop.

- Higher productivity historically means you hire more, not fewer, of the productive people. Expect a larger number of smaller teams, an entrepreneurship explosion, and generalists winning as credentials matter less than creativity, taste, and judgment.

- The throughline is people with AI versus people without AI. The single best investment right now is getting genuinely good with the tools and learning the exact edges of what they can and cannot do.

Detailed Summary

Software Factories and the Thousand-X Engineer

Guillermo Rauch opens with the idea that has him “pilled”: the engineer’s job has changed from shipping output directly to building the factory that produces multiplicative outputs. That reframes how you evaluate people and surfaces an old, controversial truth. He used to get flamed on Twitter for asserting 10x engineers, since it offends an equality instinct, but in intellectual and digital domains the real spread is 100x or 1000x, and choosing the right thing to work on is an infinite multiplier on top. AI leverage makes this less controversial, except that people now confuse token spend for productivity. The group agrees token leaderboards are the new lines-of-code. Max Hodak adds that a model is about as good as you are in a domain, so a capable developer gets a powerful collaborator while a junior gets junior-grade help, and the sporadic feedback you give, the reprompting, disproportionately determines the result. Naval’s posture is the opposite of fussy: he ignored every prompt-engineering trick on the bet that the models would improve faster than he could learn to game them, types less and less, and brute-forces problems by throwing multiple models at them. Waste tokens, save time, because tokens are cheaper than people.
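The "throw multiple models at the same problem" habit can be sketched in a few lines. This is a minimal illustration, not anything the speakers described building: `model_a`, `model_b`, and `model_c` are hypothetical stand-ins for real model clients, and `passes_check` is an assumed verifier. The point it shows is that you spend extra tokens in parallel and only look at the first answer that survives a check.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed
from typing import Callable, List, Optional

# Hypothetical stand-ins for real model clients (assumptions for illustration).
# Each takes a prompt and returns candidate source code as text.
def model_a(prompt: str) -> str:
    return "def add(a, b): return a - b"  # plausible-looking but wrong

def model_b(prompt: str) -> str:
    return "def add(a, b): return a + b"

def model_c(prompt: str) -> str:
    return "def add(a, b): return a + b + 0"

def passes_check(candidate: str) -> bool:
    """Assumed verifier: run the candidate and test the behavior we care about."""
    scope: dict = {}
    try:
        exec(candidate, scope)
        return scope["add"](2, 3) == 5
    except Exception:
        return False

def first_verified(
    prompt: str,
    models: List[Callable[[str], str]],
    verify: Callable[[str], bool],
) -> Optional[str]:
    """Ask every model at once; return the first answer that passes verification."""
    with ThreadPoolExecutor(max_workers=len(models)) as pool:
        futures = [pool.submit(m, prompt) for m in models]
        for fut in as_completed(futures):
            try:
                answer = fut.result()
            except Exception:
                continue  # one model failing should not sink the others
            if verify(answer):
                return answer  # remaining calls still finish as the pool shuts down
    return None

result = first_verified("write add(a, b)", [model_a, model_b, model_c], passes_check)
```

The design choice worth noticing is that the human effort goes into `passes_check`, not into reading each model's output, which is the same verifier framing that comes up later in the episode.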

Is Pure Software Dead, and the Building-Block Economy

Rauch describes models crossing from junior to principal engineer: they now return with several routes and explicit tradeoffs, push back when you try to jam high-cardinality telemetry into Postgres, and suggest ClickHouse or Athena instead. That elevates taste and judgment as the human contribution. He then poses the hard question: is pure software engineering obsolete now that models speak fuzzy, sloppy English and you no longer need code to communicate with them? For hardware founders it is a boon, echoing Patrick Collison’s line that software is art and artists are hard to hire. To temper the “agents reinvent everything” fantasy, he invokes Mitchell Hashimoto’s building-block economy: you do not want your agent rebuilding a queue from first principles every time it sends an email, and shared dependencies like a common Postgres version carry real cooperation value. Reusable infrastructure becomes more valuable in the agentic era, functioning like libraries and dependencies, or even a token cache, so models fork from existing starting points instead of burning a trillion tokens to recreate what exists. Naval and Max both note they had not written code in years and now build daily through agents, because understanding how APIs, data flow, and performance fit together matters more than syntax, and vibe coding is just transmitting intent the way a good engineering leader already did through people.

Vibe Coding Hardware at Boom Supersonic

Blake Scholl explains how AI changed the role of software and hardware developers at Boom. A great deal of hardware engineering lives in complex Excel spreadsheets and VBScript on individual laptops, with no source control and no automated testing, and handoffs happen manually over email like it is the 1990s. Boom had long tried to turn these flows into real software but could never afford enough software engineers. The new model is that software engineers create the architectures, because they understand systems, algorithms, and separation of concerns, and hardware engineers vibe code their own pieces. The result is mind-blowing productivity for small teams. His example: a turbine blade is cold at rest and expands when hot, so you must design both the cold and hot shapes and convert between structures and aerodynamics, work that took one engineer a full day per blade across a thousand blades in a jet. With a combined software-and-hardware tool you can now change blade geometry and see structural and aerodynamic results in real time, letting two engineers design an entire jet engine. The group extends this to the death of enterprise collaboration software and even spreadsheets, since you can now code the exact custom tool you need, and predicts AI will soon generate STEP files and PCB layouts, carrying the boom into mechanical and electrical engineering.

China, Open Source, and Which Models Actually Get Used

Naval argues China is going all-in on open-source models because its hardware and supply-chain superiority pairs naturally with on-demand software generation, which erases Silicon Valley’s software edge, and because the Chinese government has a history of funding ecosystem-wide efforts in network-effect businesses. Without frontier coding models there is no self-improvement, so a country that cannot generate frontier software falls behind on generating everything downstream. He notes the irony that almost all the open-source heft now comes from China, since OpenAI is not open, Grok and Google’s local models trail, and Anthropic ships no open models. On real usage, Rauch reports from Vercel’s AI gateway that frontier intelligence dominates the top, with a caveat: frontier intelligence at the right cost and performance, like Gemini, slaps at scale and is the best industrial production model for support and browser automation, while Chinese models are not in the frontier coding tier. Naval frames intelligence as an unalloyed good, since model mistakes are invisible and a smarter model is still cheaper than a person, which pushes everyone toward the most intelligent option and risks an oligopoly in AI.

Vertical Integration, Verifiers, and the Slop Problem

Max Hodak lays out Science’s vertical integration: the preference is always to buy, as with cheap PCBs from Asia, but when components do not exist you must make them, and the closer a product gets to a single block of covalently bonded matter the better it performs. Science owns a captive MEMS foundry on the east coast because there was no other way to do the packaging and assembly it needed. He notes AI’s most surprising internal impact so far is regulatory: generating documentation and tracing which of thousands of ISO standards apply, work that once tied up a quality team for months. Rauch raises the slop problem: mountains of AI-generated code arriving as pull requests nobody can read line by line. His standard is that an engineer must be able to say they understand and will stand behind the consequences of a PR, backed by the test harness, proofs, and type checkers, even without reading it all. Naval generalizes this into humans becoming verifiers, with lawyers, engineers, and operators moving to verifying the stack and standing behind it, and Rauch warns that creating software is the easy zero-to-one part while keeping it secure, tested, performant, and maintained a thousand days later is the real test.

Regulation as Test Suite, and the Voter Problem

Blake describes building a RAG that compresses a 200-page lightning-strike compliance test plan from months of a “monkey at keyboard” engineer’s work into minutes, with a powerful second-order effect: change the airplane and you regenerate compliance in minutes instead of crying over months of rework, which slashes change aversion and lets a small number of creative engineers iterate. Max reframes regulations as potentially good guard rails, a test suite and exit criteria for agent loops, provided they are non-contradictory and reasonable, since the alternative is shipping slop into the air. Naval warns of a Red Queen race of agent-on-agent compliance and agencies getting DDoSed by clever entrepreneurs flooding them with documents. Blake pushes for enforcement-based rather than pre-approval regulation, using the analogy that we would never tolerate filing a driving plan before every trip, yet that is exactly how physical infrastructure works: guilty until proven innocent. He cites the 737 Max’s single all-authority sensor and the NRC permitting almost no nuclear plants for decades as proof that this makes us slower, not safer. Hodak supplies the counterweight: the deeper issue is the voters and the regulator’s asymmetric incentives, where approving a bad thing ends a career and blocking a good thing goes unnoticed. Remove an agency and the electorate installs its twin. Naval and Max agree the real reforms are narrow, including innovation zones, opt-in YIMBY zones, and the experimental laboratory of fifty states.
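The idea of regulations as a test suite and exit criteria for an agent loop can be made concrete with a small sketch. Everything here is hypothetical: the rule identifiers, the numeric limits, and the `propose_fix` callables (which stand in for an agent proposing a design change) are invented for illustration and are not real regulations or anything Boom or Science described.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Design = Dict[str, float]

@dataclass
class Requirement:
    rule_id: str                            # hypothetical identifier, not a real rule
    check: Callable[[Design], bool]         # the "test" derived from the rule
    propose_fix: Callable[[Design], Design] # stand-in for an agent's proposed change

def failing(design: Design, reqs: List[Requirement]) -> List[Requirement]:
    """Return every requirement the current design does not satisfy."""
    return [r for r in reqs if not r.check(design)]

def agent_loop(design: Design, reqs: List[Requirement], max_iters: int = 10) -> Tuple[Design, bool]:
    """Iterate until all requirements pass (the exit criterion) or the budget runs out."""
    design = dict(design)  # do not mutate the caller's design
    for _ in range(max_iters):
        failed = failing(design, reqs)
        if not failed:
            return design, True
        design = failed[0].propose_fix(design)  # address one failure per iteration
    return design, not failing(design, reqs)

# Invented limits, for illustration only.
requirements = [
    Requirement(
        "HYPOTHETICAL-BOND-01",
        lambda d: d["bond_resistance_mohm"] <= 2.5,
        lambda d: {**d, "bond_resistance_mohm": d["bond_resistance_mohm"] * 0.5},
    ),
    Requirement(
        "HYPOTHETICAL-SKIN-02",
        lambda d: d["skin_thickness_mm"] >= 1.0,
        lambda d: {**d, "skin_thickness_mm": d["skin_thickness_mm"] + 0.25},
    ),
]

start = {"bond_resistance_mohm": 8.0, "skin_thickness_mm": 0.5}
final_design, compliant = agent_loop(start, requirements)
```

The sketch also shows why the "non-contradictory" condition matters: if two requirements can never hold at once, `failing` never returns an empty list and the loop simply exhausts `max_iters` without reaching its exit criterion.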

Drug Discovery, Healthcare Economics, and N-of-1 Medicine

Hodak explains why innovation zones do not solve drug discovery. The Right to Try Act and single-patient IND already exist, and the FDA approves over 99% of such requests, sometimes by phone, but dosing requires clinical-grade drug that only the IP owner has, and the FDA will draw an adverse inference against the whole program if a very sick patient does worse. A targeted fix is to prohibit adverse inferences across different users of a compound. He points to Europe’s notified-body system, private certifiers blessed by governments, as a way to scale review capacity, and to China’s NMPA (formerly the CFDA), which already approved an implantable brain-computer interface and brings products to market far cheaper. His core economic argument is that healthcare is a fixed bucket of money that grows only with tax receipts, unlike phones and laptops where falling prices expanded the market, so spending 10x more on healthcare would be a catastrophe rather than the triumph that 10x AI spending would be. With no private market you run a small communist society inside a capitalist one, with the lines and frozen quality that implies. The way out is lower cost-to-market so patients can finance care like a car, which is the direction China is pushing. Naval’s twist is a healthcare plan where the first 20% of income is the deductible to recreate a private market, citing LASIK, dental, and plastic surgery as fields that advance because patients pay directly. The group closes the segment on GitLab’s Sid Sijbrandij, who outlived a rare-cancer prognosis by building his own escalation ladder of drugs, noting that N-of-1 medicine works at the high end but demands enormous agency exactly when a patient is weakest, which is where AI should democratize access to knowledge.

Autonomous Software, Hackathons, and the Autonomous Company

Asked how much autonomous software they run, Rauch describes Vercel automating much of site reliability engineering: instead of hand-set alarm thresholds, anomalies in error rate, latency, or throughput fire an alert, an agent investigates, can open an incident that loops in people, and begins remediation, stopping just short of changing production. Vercel also runs autonomous optimization and security research, and an open-sourced security tool run against the entire monorepo with 10,000 concurrent agents produced several quarters of security research in a couple of days for about $14,000 in tokens, the equivalent of months of red teaming. Max shares a vibe-coded bug-reporting queue where TestFlight users submit logs and screenshots, a daemon analyzes and fixes issues in the background, and ships him a build to try, raising the prospect of apps effectively built by their users, with the caveat that you would get a Homer Simpson car of every feature. Blake recounts stopping all project work for a week and requiring everyone, from the receptionist to the engineers, to build something with AI and demo it. He expected mostly silly projects and got mostly needle movers, including a genuinely useful automation from the shipping and receiving associate, concluding that most people have an idea worth building but cannot tell a good first idea from a bad one until they can iterate on a real thing. Rauch extends this to a workforce that trains the agents doing the work rather than doing it directly, and a coming feature to extract reusable skills from your inputs and outputs.
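The alert-to-remediation flow Rauch describes can be sketched as a small pipeline. This is an assumption-heavy illustration, not Vercel’s implementation: the rolling z-score is a swapped-in, simple way to flag anomalies without hand-set thresholds, and `investigate` is a hypothetical stub standing in for an investigating agent. The one property taken directly from the description is that the pipeline proposes remediation but stops short of changing production.

```python
from statistics import mean, stdev
from typing import Dict, List

def is_anomalous(history: List[float], latest: float, z_limit: float = 3.0) -> bool:
    """Flag a reading that deviates strongly from recent history (simple z-score)."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return latest != mu
    return abs(latest - mu) / sigma > z_limit

def investigate(metric: str, history: List[float], latest: float) -> str:
    """Hypothetical stub for an agent that would inspect logs, deploys, and traces."""
    return f"{metric} moved from a recent mean of {mean(history):.2f} to {latest:.2f}"

def handle_metric(metric: str, history: List[float], latest: float) -> Dict[str, object]:
    """Anomaly -> investigation -> incident with a proposed fix, never an applied one."""
    if not is_anomalous(history, latest):
        return {"metric": metric, "status": "ok"}
    return {
        "metric": metric,
        "status": "incident_opened",  # this is the step that loops in people
        "finding": investigate(metric, history, latest),
        "proposed_remediation": "roll back the most recent deploy",  # illustrative text
        "applied_to_production": False,  # the agent stops short of changing production
    }

error_rate_history = [0.9, 1.1, 1.0, 1.0, 0.9, 1.1]
quiet = handle_metric("error_rate_pct", error_rate_history, 1.05)
spike = handle_metric("error_rate_pct", error_rate_history, 5.0)
```

Keeping `applied_to_production` as an explicit field makes the human sign-off boundary visible in the data, so moving that boundary later is a deliberate change and not an accident.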

Creativity, Out-of-Distribution Surprise, and What Humans Can Uniquely Do

On the intelligence-versus-agency split, Max suggests returns to humans tilt toward agency since agents supply intelligence, while Naval counters that you stay 99% intelligence and 1% agency because the agents exercise the agency for you. They agree the humans best suited to the future are the agentic ones who open a coding agent and ask what to build. Coding has perhaps 10x more participants than a year ago, yet roughly 99% still never will, because the first step is unimaginable to a non-coder, even as vibe coding proves more addictive and entertaining than video games while producing something real. On AI video, the group notes it still lacks taste and judgment, but expects fan-made films by 2030, dozens of Lord of the Rings takes or generated seasons of The Expanse, while prizing a genuinely new imaginative work over a remix. The long closing debate turns on definitions. Hodak defines art as meaningful out-of-distribution behavior, broad enough to include a military maneuver, and expects models to reach it. Naval defines art as conveying emotion with intent, which makes attribution decisive: the same photo means more taken by a human, and a hardware-attestation startup gains a real use case. They cite Gödel stepping outside the formal system as the human archetype and the identical look of every Claude-built website as in-distribution slop. Naval lands the episode on optimism: productivity gains mean hiring more, not fewer, of the creative and AI-fluent, the future is a larger number of smaller teams and an entrepreneurship explosion where generalists thrive and credentials fade, and the single best move is to get extremely good with the tools, because it is people with AI versus people without AI.

Notable Quotes

“Now clearly there’s 100x or a thousandx engineers and the world hasn’t fully adjusted to this.”

Guillermo Rauch, on why AI made the spread between engineers impossible to ignore

“Just waste tokens, save time. Don’t look at the tokens either as inputs or outputs. Just look at your time and look at the final output.”

Naval Ravikant, on the right way to measure AI’s return

“We had to learn code to communicate with the models. Now the models speak English and they speak fuzzy sloppy English like a human and they understand things.”

Guillermo Rauch, asking whether pure software engineering is now obsolete

“It allows two engineers to design an entire jet engine, which is just wildly different.”

Blake Scholl, on Boom turning hardware engineering into software

“You need to be able to say I am signing off on understanding the consequences of this PR.”

Guillermo Rauch, on what it means to stand behind code you did not read line by line

“That is absolutely the way we build physical infrastructure in this country. It’s guilty until proven innocent. And what we should actually do is make more of these things enforcement based rather than pre-approval based.”

Blake Scholl, comparing the permitting process to filing a driving plan before every trip

“You’re basically running a small communist society inside a larger capitalist society. And that’s what we’re doing in healthcare.”

Max Hodak, on why there is no real private market in healthcare

“I expected we would get a large number of silly projects and a small number of needle movers. And what we got was a large number of needle movers and a very small number of silly projects.”

Blake Scholl, on the week he had the whole company build with AI

“If a person takes the photo versus AI generates the exact same photo down to the last pixel, the person taking the photo will have more meaning for me.”

Naval Ravikant, on why intent and attribution make something art

“It’s about people with AI versus people without AI. And so the single best thing you can be doing right now for yourself is just getting really good with these tools.”

Naval Ravikant, closing the conversation on the only divide that matters

Watch the full conversation here: The AI Industrial Revolution on the Naval Podcast YouTube channel.

Related Reading

- Part one: Waste Tokens to Save Time, our writeup of the first segment, on software factories, the thousand-x engineer, token leaderboards, and whether pure software is dead.

- Part two: Vibe Coding Hardware, our writeup of the second segment, on AI-designed jet engines, vertical integration, China’s open-source bet, and humans as verifiers.

- Naval Ravikant’s official site, the canonical home for Naval’s essays and podcast on technology, judgment, and leverage.

- Boom Supersonic, Blake Scholl’s company building supersonic aircraft and its own jet engines, source of the turbine-blade and two-engineers example.

- Science Corporation, Max Hodak’s brain-computer interface company, whose captive MEMS foundry and FDA arguments anchor the hardware and healthcare segments.

- Vercel, Guillermo Rauch’s company, whose AI gateway data and autonomous SRE work inform the usage and automation discussion.