Andrej Karpathy's Autoresearch is the breakout open-source project of March 2026. Released just days ago, this minimalist framework of roughly 630 lines lets AI coding agents (Claude, GPT-4o, Gemini, etc.) autonomously run real LLM pretraining experiments on a single GPU while you sleep. It is already producing improvements that transfer to bigger models and has landed a new leaderboard entry.

Read Karpathy’s original announcement post on X here (23k+ likes, 7M+ views).

If you’re searching for the best “Karpathy Autoresearch tutorial”, “how to setup autoresearch”, or “AI agents LLM experiments overnight”, this is the most detailed, up-to-date guide on the internet.

TL;DR – Karpathy Autoresearch in 60 Seconds

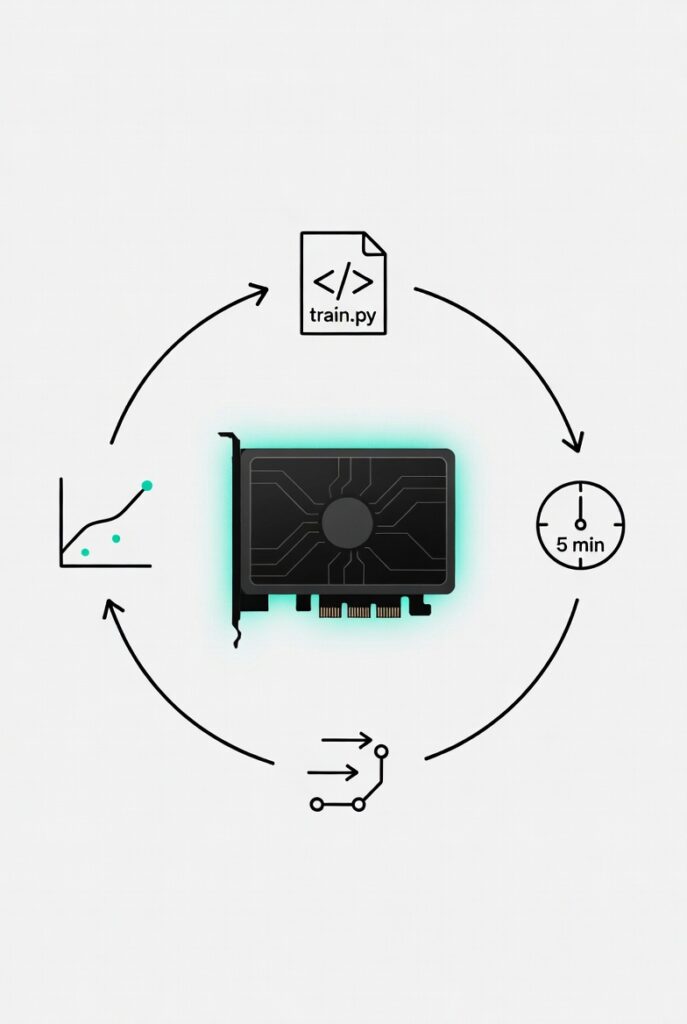

Karpathy Autoresearch is a single-GPU agent-driven system where:

- You write the high-level research goal in program.md

- The AI agent only edits train.py

- Every experiment runs for exactly 5 wall-clock minutes

- Better (lower) val_bpb score? Keep the git commit. Worse? Auto-revert

- ~100 experiments per night → real breakthroughs while you sleep
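The fixed 5-minute budget from the list above can be pictured as a training loop that stops on elapsed wall-clock time rather than on a step count. The following is a minimal sketch under that assumption; `train_for_budget`, `step_fn`, and `clock` are hypothetical names chosen for illustration and are not taken from the autoresearch repo.

```python
import time


def train_for_budget(step_fn, budget_seconds=300.0, clock=time.monotonic):
    """Call step_fn repeatedly until the wall-clock budget is used up.

    step_fn and clock are hypothetical stand-ins for illustration only.
    Returns the number of completed steps.
    """
    start = clock()
    steps = 0
    # Stop on elapsed time, not on a fixed number of steps.
    while clock() - start < budget_seconds:
        step_fn(steps)
        steps += 1
    return steps
```

With a time-based stop like this, a change that makes each step faster gets more steps within the same 5 minutes, so every experiment is compared at equal wall-clock cost. Passing a fake `clock` makes the loop easy to test without waiting.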

Official repo: github.com/karpathy/autoresearch. It targets NVIDIA GPUs; community forks cover Mac and Windows.

What Is Andrej Karpathy Autoresearch and Why Everyone Is Talking About It

In his viral X post Karpathy wrote: “One day, frontier AI research used to be done by meat computers… That era is long gone.”

Autoresearch takes his famous nanochat training core, strips it to a single file, and hands the entire research loop to AI agents. The human only updates the strategy in program.md. The agent experiments with architecture, optimizers, attention variants, RoPE, batch sizes — everything — and keeps only improvements via git.

Real results: after ~650 experiments, improvements discovered on depth-12 models already transfer to depth-24 and are landing nanochat a new “time to GPT-2” leaderboard spot.

How Autoresearch Actually Works (Step-by-Step)

The system is deliberately minimal so agents can understand and modify it instantly:

- prepare.py (DO NOT TOUCH) – dataset + BPE tokenizer.

- program.md – your research instructions and constraints.

- train.py – full GPT model + Muon+AdamW optimizer + training loop. This is the ONLY file the agent edits.

Agent loop (runs forever): read program.md → edit & commit → train 5 minutes → measure val_bpb → keep or revert. ~12 experiments per hour.
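The keep-or-revert step of the loop above can be sketched in a few lines of Python. This is an illustration only: the function names are hypothetical, the revert via `git reset --hard HEAD~1` is an assumption about how a rejected commit might be undone, and the actual repo may handle this differently. Lower val_bpb is treated as better.

```python
import subprocess


def git(*args):
    """Run a git command in the current repo and return its stdout."""
    result = subprocess.run(
        ["git", *args], check=True, capture_output=True, text=True
    )
    return result.stdout.strip()


def revert_last_commit():
    # Assumption: a rejected experiment is undone by dropping its commit.
    git("reset", "--hard", "HEAD~1")


def research_loop(propose_and_commit, train_and_eval, baseline_bpb,
                  n_experiments, revert=revert_last_commit):
    """Run experiments and keep only commits that lower val_bpb.

    propose_and_commit(i): hypothetical callback that edits train.py and commits.
    train_and_eval(): hypothetical callback that trains and returns val_bpb.
    """
    best = baseline_bpb
    history = []
    for i in range(n_experiments):
        propose_and_commit(i)
        new_bpb = train_and_eval()
        kept = new_bpb < best  # lower bits per byte is better
        if kept:
            best = new_bpb
        else:
            revert()
        history.append((i, new_bpb, kept))
    return best, history
```

Because the callbacks are injected, the loop logic can be exercised with fake scores before pointing it at a real repository.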

Karpathy Autoresearch Setup Guides – NVIDIA, Mac & Windows

1. Official NVIDIA Setup (5 Minutes)

curl -LsSf https://astral.sh/uv/install.sh | sh

git clone https://github.com/karpathy/autoresearch.git

cd autoresearch

uv sync

uv run prepare.py

uv run train.py

Then paste the repo into Claude/GPT-4o and say: “Have a look at program.md and let’s kick off a new experiment!”

2. MacOS / Apple Silicon (MLX Fork)

github.com/trevin-creator/autoresearch-mlx – same commands, optimized for M-series chips.

3. Windows RTX Fork

github.com/jsegov/autoresearch-win-rtx – full PowerShell compatibility.

Key Takeaways – What You Need to Remember

- Only ~630 lines of code and runs on one GPU

- Humans edit only program.md; the agent edits only train.py and runs the experiments

- Fixed 5-minute runs = fair comparisons

- val_bpb metric is reliable and vocab-size independent

- Real transferable gains proven (depth-12 → depth-24)

- Mac, Windows, and NVIDIA versions all live today

- Community already reporting 19%+ overnight gains

- This is the seed of swarm-style AI research labs
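The vocab-size independence of val_bpb noted in the list above follows from how bits per byte is normally defined: the summed cross-entropy over all tokens is divided by the number of bytes of raw text, not by the number of tokens. The sketch below uses that standard definition with made-up numbers; `bits_per_byte` is a hypothetical helper, and the repo's own computation may differ in detail.

```python
import math


def bits_per_byte(total_nll_nats, total_bytes):
    """Convert summed token-level cross-entropy (in nats) to bits per byte."""
    return total_nll_nats / (math.log(2) * total_bytes)


# Illustrative numbers only: the same 1000-byte text under two tokenizers.
text_bytes = 1000
bpb_small_vocab = bits_per_byte(250 * 2.8, text_bytes)  # 250 tokens, 2.8 nats each
bpb_large_vocab = bits_per_byte(200 * 3.5, text_bytes)  # 200 tokens, 3.5 nats each
```

The per-token losses differ (2.8 versus 3.5 nats), but both runs spend the same total information on the same bytes, so their bits-per-byte values match. That is why an agent can change the tokenizer or vocabulary size and still be scored fairly.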

Detailed Summary of Andrej Karpathy Autoresearch

Released March 7-8, 2026, Autoresearch is the minimal viable version of fully autonomous LLM research: human strategy → AI agent execution → git-tracked progress. The entire system is designed to be forked and scaled into a massively collaborative platform where thousands of agents contribute “tiny papers” across branches.

Karpathy’s Vision: From Solo Agents to Swarm Research

In his follow-up X post Karpathy explained the bigger idea: asynchronously massively collaborative agent research (SETI@home style). The current code is just the seed — the real future is agents forking, discussing via GitHub, and accumulating discoveries across thousands of branches.

Read the full vision post on X here.

Final Thoughts on Karpathy Autoresearch

From the explosive announcement post to Mac/Windows forks appearing in 48 hours and real leaderboard improvements already confirmed, this project feels like the moment everything changed. Anyone with a GPU now has a full AI research team working overnight for almost zero cost. The human’s only job is writing the research strategy prompt. The era of manual experimentation is ending, and it’s ending fast. Download the repo tonight, write your first program.md, and wake up to new discoveries.

Official Karpathy X Posts & Resources

- Official Autoresearch GitHub Repository

- Karpathy’s Original Announcement Post on X

- Karpathy’s Vision for Massively Collaborative AI Research on X

- Latest Update: Improvements Transfer to Depth-24 & New Leaderboard Entry

Ready to Start? Clone the repo, run the setup commands, and let your agents take over. Bookmark this page: new forks and agent-generated papers will drop daily. Drop your overnight results in the comments!