On May 15, 2026, xAI shipped a major update to the open-source release of the X “For You” recommendation algorithm. The repository now includes a runnable end-to-end inference pipeline, a pre-trained mini Phoenix transformer, a brand-new content-understanding service called Grox, and ad-blending logic. This is the most transparent look at how a major social feed actually picks your posts that has ever been published.

This is the practical, plain-English guide. We read the source. Here is exactly how a post travels from your fingertips to someone’s For You tab, and what you can do to be the post that wins.

The whole strategy in one sentence

Write posts people reply to, repost, DM to a friend, linger on, and follow you for. Avoid anything that earns a mute, block, report, or spam flag. Space your posts hours apart. That is the algorithm.

TL;DR

- The For You feed is no longer a stack of heuristics. It is a single transformer-based machine learning system that predicts the probability you will like, reply, repost, share, dwell on, or hide a given post.

- Posts come from two pools: Thunder (people you follow) and Phoenix Retrieval (the rest of X, found by similarity search).

- A model called Phoenix scores every candidate against your engagement history. The final score is a weighted sum of nineteen predicted actions, with negative weights for “not interested”, “block”, “mute”, and “report.”

- Almost no hand-engineered features survive. Freshness, verification badges, follower counts, and post type are not directly boosted. They are signals the transformer learns to use from your behaviour.

- Out-of-network content is penalised by a tunable factor, so in-network posts have an edge by default.

- A separate service called Grox continuously classifies posts for spam, policy violations, and brand safety. Flagged content gets filtered or de-amplified before it reaches scoring.

- The best optimisations are still the boring ones: write posts that earn long dwell time, replies, reposts, and follows, and avoid anything that triggers mutes or reports.

What changed on May 15, 2026

The January 2026 release gave us the architecture but not a working system. The May update is the one that matters:

- phoenix/run_pipeline.py replaces the separate retrieval and ranking scripts with a single inference entry point that mirrors production.

- A pre-trained mini Phoenix model (256-dim embeddings, 4 attention heads, 2 transformer layers) is bundled as a roughly 3 GB Git LFS archive. You can run inference without training.

- The Grox content-understanding service is now public. It runs classifiers and embedders for spam detection, post categorisation, and policy enforcement.

- Ads blending is now in the open. So is brand-safety tracking.

- New query hydrators mean the model sees your followed topics, starter packs, served history, impression bloom filters, IP, and mutual-follow graph at request time.

- New candidate hydrators add engagement counts, language codes, media detection, quote post expansion, and mutual follow scores.

- New candidate sources for ads, who-to-follow, Phoenix Mixture-of-Experts, Phoenix Topics, and prompts.

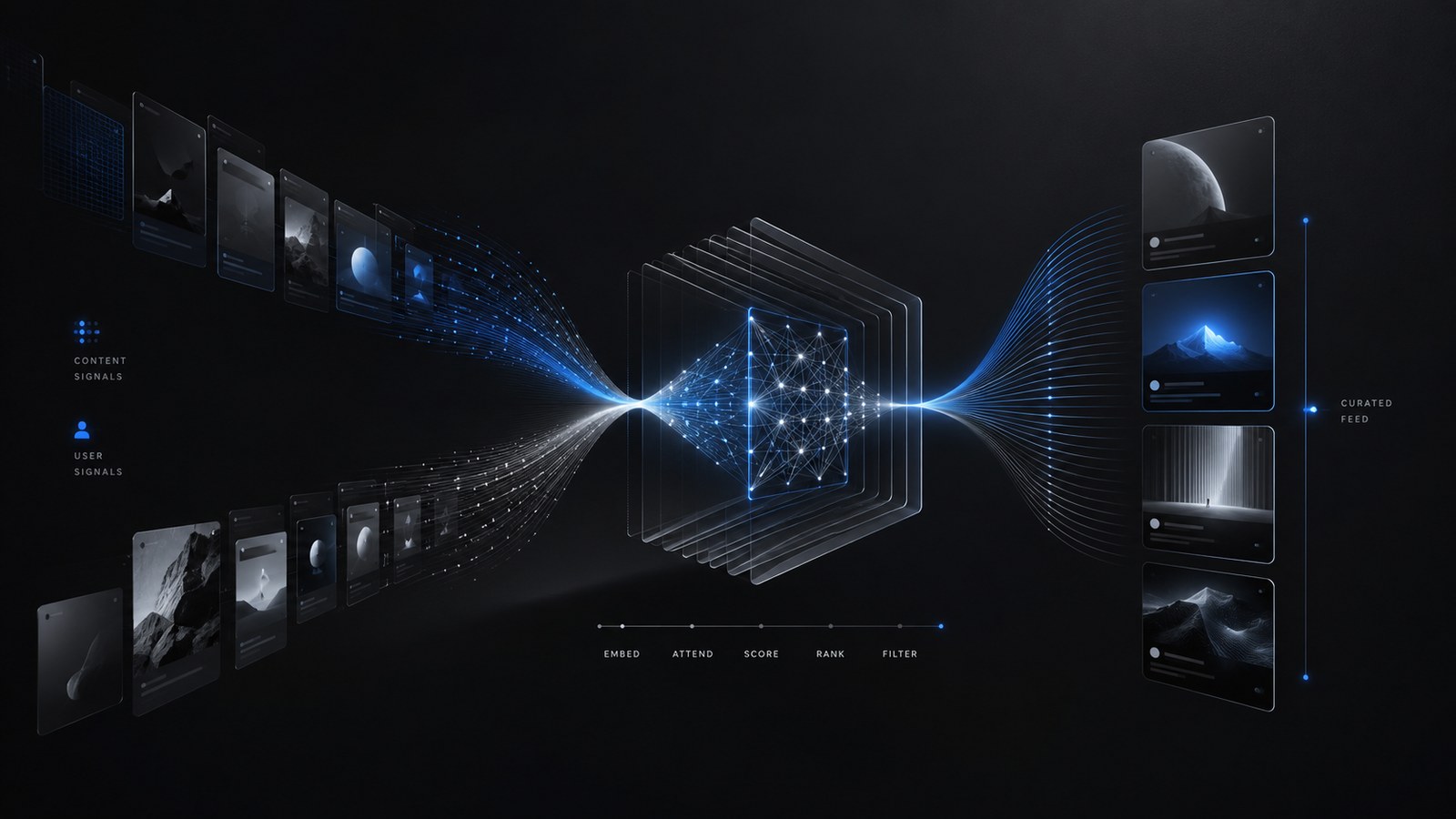

The For You pipeline in one picture

Every time you pull to refresh, the same pipeline runs:

- Query hydration: pull the requesting user’s engagement history, follow list, topics, served history, and metadata.

- Candidate sourcing: gather candidates from Thunder and Phoenix Retrieval in parallel.

- Candidate hydration: enrich each candidate with text, media, author, engagement counts, brand-safety labels, language, mutual follow scores.

- Pre-scoring filters: drop duplicates, posts that are too old, your own posts, blocked or muted authors, posts you’ve already seen, and posts with your muted keywords.

- Scoring: run candidates through Phoenix, combine the predicted action probabilities into a weighted score, attenuate repeated authors, penalise out-of-network content.

- Selection: sort by score, take the top K.

- Post-selection filtering: final visibility check for deleted, spam, violence, gore, abuse, and de-duplication of conversation branches.

- Side effects: cache request info, then return the ranked feed.

That is the whole story. Every choice the system makes lives in one of those stages.
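The stages above can be sketched in a few lines of Python. This is a minimal illustration, not code from the repository: the `Candidate` class, the `run_pipeline` function, and every parameter name are hypothetical, and only the filter, score, and select steps are shown.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    post_id: int
    author_id: int
    score: float = 0.0


def run_pipeline(candidates, seen_ids, blocked_authors, score_fn, top_k):
    """Hypothetical, heavily simplified filter -> score -> select flow."""
    # Pre-scoring filters: drop already-seen posts, blocked authors, duplicates.
    unique = {}
    for c in candidates:
        if c.post_id in seen_ids or c.author_id in blocked_authors:
            continue
        unique.setdefault(c.post_id, c)  # keeps the first copy of each post

    # Scoring: score_fn stands in for the Phoenix model plus the scorers.
    scored = [Candidate(c.post_id, c.author_id, score_fn(c)) for c in unique.values()]

    # Selection: sort by score, take the top K.
    scored.sort(key=lambda c: c.score, reverse=True)
    return scored[:top_k]
```

Hydration, post-selection filtering, and side effects are left out to keep the shape visible: each stage takes a list of candidates and hands a smaller or richer list to the next.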

Where candidates come from: Thunder vs Phoenix

Two sources feed the pipeline.

Thunder is the in-network store. It is an in-memory firehose that consumes post create and delete events from Kafka and serves sub-millisecond lookups for recent posts from people you follow. Posts older than the retention window get trimmed automatically. Thunder is why the feed feels fast.
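To make the idea concrete, here is a toy in-memory store with create events, delete events, and retention trimming. It is a sketch under assumptions: the class name `RecentPostStore`, its methods, and the per-author deque layout are invented for illustration, and it assumes create events arrive in timestamp order. The real Thunder service consumes Kafka and is not written like this.

```python
from collections import defaultdict, deque


class RecentPostStore:
    """Hypothetical store: author_id -> deque of (timestamp, post_id)."""

    def __init__(self, retention_seconds):
        self.retention_seconds = retention_seconds
        self.posts_by_author = defaultdict(deque)

    def on_create(self, author_id, post_id, timestamp):
        # Assumes events arrive in timestamp order, so each deque stays sorted.
        self.posts_by_author[author_id].append((timestamp, post_id))

    def on_delete(self, author_id, post_id):
        posts = self.posts_by_author[author_id]
        self.posts_by_author[author_id] = deque(p for p in posts if p[1] != post_id)

    def trim(self, now):
        # Drop everything older than the retention window.
        cutoff = now - self.retention_seconds
        for posts in self.posts_by_author.values():
            while posts and posts[0][0] < cutoff:
                posts.popleft()

    def recent_posts(self, followed_author_ids, now):
        self.trim(now)
        found = []
        for author_id in followed_author_ids:
            found.extend(self.posts_by_author.get(author_id, ()))
        found.sort(reverse=True)  # newest first
        return [post_id for _, post_id in found]
```

Lookups only touch the authors a user follows, which is why an in-memory structure like this can answer quickly.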

Phoenix Retrieval is the out-of-network source. It is a classic two-tower neural network. A user tower encodes your features and recent engagement history into a single embedding. A candidate tower does the same for every post in the global corpus. The system then does an approximate nearest-neighbour search over those embeddings to find the posts whose vectors point most similarly to yours. A dot product between vectors is all it takes.
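The retrieval step can be illustrated with a brute-force version of the same idea. This sketch assumes the two towers have already produced embeddings, and it does an exact search rather than the approximate nearest-neighbour search used at scale. The function names and the toy two-dimensional vectors are made up for the example.

```python
def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def retrieve_top_k(user_embedding, post_embeddings, k):
    """Exact dot-product search; a production system would use an ANN index."""
    ranked = sorted(
        post_embeddings.items(),
        key=lambda item: dot(user_embedding, item[1]),
        reverse=True,
    )
    return [post_id for post_id, _ in ranked[:k]]


posts = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [0.7, 0.7]}
print(retrieve_top_k([1.0, 0.2], posts, 2))  # ['a', 'c']
```

Nothing in this computation refers to the author's follower count. Only the geometry of the embeddings matters.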

How many candidates from each side? The code does not hard-code a ratio. It is set at runtime via parameters (ThunderMaxResults and PhoenixMaxResults). New users get a different retrieval cluster while their account is below an age threshold and a minimum-following count.

The creator implication is the part most guides miss: follower count is not what gets you into out-of-network feeds. Embedding similarity is. Phoenix knows nothing about how famous you are. It knows that the people who engage with posts like yours have engagement histories that look like the histories of users it is trying to serve.

How Phoenix ranks posts

After candidates arrive, every one of them gets a score from the Phoenix ranking transformer. The architecture, per phoenix/README.md, is small by language-model standards:

- 128-dimensional embeddings

- 4 transformer layers

- 4 attention heads

- 127-position user history sequence

- 64-position candidate sequence

- 1,000,000 entries each in the user, item, and author vocabularies (with 2 hash functions per entity)

- 19 predicted action types
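One common way to get "2 hash functions per entity" over a fixed-size vocabulary is to map each ID to two table rows with two differently salted hashes. The sketch below shows only that index computation. The choice of SHA-256, the salting scheme, and the function name `bucket_indices` are assumptions for illustration, since the article's source does not describe which hash functions Phoenix uses or how the two looked-up rows are combined.

```python
import hashlib

VOCAB_SIZE = 1_000_000  # entries per vocabulary, per the list above
NUM_HASHES = 2          # hash functions per entity, per the list above


def bucket_indices(entity_id, vocab_size=VOCAB_SIZE, num_hashes=NUM_HASHES):
    """Map one ID to `num_hashes` row indices using salted SHA-256 (assumed)."""
    indices = []
    for salt in range(num_hashes):
        digest = hashlib.sha256(f"{salt}:{entity_id}".encode("utf-8")).digest()
        indices.append(int.from_bytes(digest[:8], "big") % vocab_size)
    return indices
```

Two independent indices mean that two entities colliding in one row are unlikely to also collide in the other, which lets a small table stand in for a very large ID space.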

The transformer’s input is a sequence of your past engagements. Each engagement carries the post you engaged with, the author, the action you took, and the product surface (For You, profile, search). The candidates are appended as a second segment. The model uses candidate isolation masking: candidates can attend to your history but not to each other. This is a deliberate engineering choice. It means a post’s score does not depend on the other posts in the batch, which keeps scoring cacheable and consistent.
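Candidate isolation is easiest to see as an attention mask. The sketch below builds one as a plain list of booleans. It assumes history positions attend to each other in both directions and that each candidate attends to itself. Both are simplifications chosen for the example, and the function name is hypothetical.

```python
def candidate_isolation_mask(history_len, num_candidates):
    """Return mask[i][j], True when position i may attend to position j.

    Positions 0..history_len-1 are history; the rest are candidates.
    """
    total = history_len + num_candidates
    mask = [[False] * total for _ in range(total)]
    for i in range(total):
        for j in range(total):
            if j < history_len:
                mask[i][j] = True   # every position can read the history
            elif i == j:
                mask[i][j] = True   # a candidate sees itself, never its peers
    return mask


for row in candidate_isolation_mask(3, 2):
    print("".join("x" if allowed else "." for allowed in row))
```

In the printed grid the candidate rows differ only in their own diagonal entry, so removing or adding another candidate cannot change what a given candidate sees.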

The output is one probability per action type, per candidate.

The action weights: what positive and negative engagement is worth

The Weighted Scorer combines those probabilities into a single number:

Final Score = Σ (weight_i × P(action_i))

The exact weight values are not in the open-source repo. They live in an external configuration crate (xai_home_mixer) that xAI tunes continuously. What the repo does show us is the shape of the signal, and that is what matters for strategy.

Positive weights are applied to these predicted actions:

- favorite

- reply

- retweet

- quote

- quoted click

- click

- profile click

- photo expand

- video view (only counted if the video is above a minimum duration)

- share

- share via DM

- share via copy link

- dwell (the user lingered on the post)

- continuous dwell time (how long they lingered)

- follow author

Negative weights are applied to:

- not interested

- block author

- mute author

- report

A few observations matter for creators. Replies, reposts, follows, and DM shares are each listed as their own separately weighted action. Because the weight values are not published, the repo cannot prove they are worth more than a like, but separate weighting is the strongest signal we have that xAI treats them that way. Dwell and continuous dwell time are split into two predictions, which means how long the average person reads your post is its own ranking lever. And the negative actions are not just filters. They actively push the score down for posts the model predicts are likely to trigger a mute, block, or report.
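The weighted sum described above is simple to write down. In this sketch every number in `HYPOTHETICAL_WEIGHTS` is a made-up placeholder, since the real values are not in the repo, and the snake_case action names are our own labels rather than identifiers from the source.

```python
# Placeholder weights for illustration only; the real values are not public.
HYPOTHETICAL_WEIGHTS = {
    "favorite": 1.0,
    "reply": 2.0,
    "retweet": 2.0,
    "follow_author": 3.0,
    "dwell": 0.5,
    "not_interested": -2.0,
    "mute_author": -4.0,
    "block_author": -5.0,
    "report": -6.0,
}


def weighted_score(probabilities, weights=HYPOTHETICAL_WEIGHTS):
    """Final score = sum of weight_i * P(action_i); unknown actions count as 0."""
    return sum(weights.get(action, 0.0) * p for action, p in probabilities.items())


engaging = {"favorite": 0.2, "reply": 0.1, "report": 0.0}
risky = {"favorite": 0.2, "reply": 0.1, "report": 0.1}
print(weighted_score(engaging) > weighted_score(risky))  # True
```

Whatever the true weights are, the structure guarantees one thing: any predicted probability of a negative action subtracts from the total instead of merely failing to add to it.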

The out-of-network penalty

Out-of-network content does not start on an even footing with in-network content. The OON Scorer multiplies a candidate’s score by a configurable factor that is less than one when the candidate came from Phoenix Retrieval. This is why following the right accounts still matters.

There is an explicit override for new users: if your account is fresh and you follow at least the minimum number of accounts, the OON penalty is softened with a more permissive factor. This is the bootstrap mechanism that gets new users a populated For You feed before they have generated enough engagement history for Phoenix to personalise around.
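A sketch of that multiplier, assuming positive scores. The default values 0.7 and 0.9 are invented placeholders, not the production settings, and the function and parameter names are hypothetical.

```python
def apply_oon_factor(score, in_network, is_new_user=False,
                     oon_factor=0.7, new_user_oon_factor=0.9):
    """Scale out-of-network scores by a factor below one (placeholder values).

    Assumes score >= 0; for a negative score a factor below one would
    raise it rather than lower it.
    """
    if in_network:
        return score
    factor = new_user_oon_factor if is_new_user else oon_factor
    return score * factor
```

With these placeholder numbers, an out-of-network post needs a raw score roughly 43% higher than an in-network post (1 / 0.7) to tie with it for an established user, and about 11% higher (1 / 0.9) for a new one.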

The author diversity damper

Once posts are sorted, the Author Diversity Scorer runs. It applies an exponential attenuation to repeated authors:

multiplier(position) = (1 - floor) × decay^position + floor

The first post from an author sits at position 0, where the multiplier is exactly 1, so it keeps its full score. The second is attenuated. The third more so. As position grows the multiplier approaches the floor value, so an author is never attenuated below that minimum. The decay and floor are tunable parameters, not constants. The practical consequence is that posting fifteen times in twenty minutes does not produce fifteen top-of-feed impressions. It produces one or two.
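The attenuation formula translates directly into code. In this sketch the decay of 0.5 and floor of 0.25 are arbitrary example values, and `apply_author_diversity` is a hypothetical helper that counts an author's position in descending score order.

```python
def diversity_multiplier(position, decay, floor):
    """(1 - floor) * decay**position + floor, with position starting at 0."""
    return (1.0 - floor) * decay ** position + floor


def apply_author_diversity(scored_posts, decay=0.5, floor=0.25):
    """scored_posts is a list of (post_id, author_id, score) tuples."""
    posts_seen = {}
    adjusted = []
    for post_id, author_id, score in sorted(scored_posts, key=lambda p: p[2], reverse=True):
        position = posts_seen.get(author_id, 0)
        posts_seen[author_id] = position + 1
        adjusted.append((post_id, author_id, score * diversity_multiplier(position, decay, floor)))
    adjusted.sort(key=lambda p: p[2], reverse=True)
    return adjusted


posts = [("p1", "alice", 1.0), ("p2", "alice", 0.9), ("p3", "bob", 0.8)]
print([p[0] for p in apply_author_diversity(posts)])  # ['p1', 'p3', 'p2']
```

In the example, the second post from "alice" drops from 0.9 to 0.5625 and falls behind a lower-scoring post from a different author.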

What Grox does (and why it is the most important new piece)

The grox/ directory is new in May 2026 and is the single most underreported part of the update. It is an asynchronous task-execution engine that runs content classifiers and embedders on every post. Among the tasks present in the repo:

- Spam detection, including a low-follower reply-spam classifier

- Safety policy classification across categories like violent media, adult content, hate, self-harm, and platform-policy violations

- A “post safety screen deluxe” pipeline that re-checks adult content classification with a second pass

- Media classification for images and video

- Multimodal post embedding for retrieval and ranking

Grox does not directly score posts. It produces labels. Those labels are consumed by the visibility filter (VFFilter) at the post-selection stage and by the brand-safety hydrator that ad placement uses. The effect is that posts the system judges to be spam, policy-violating, or unsafe to run ads next to are not necessarily removed outright. They are either de-amplified before they reach the Phoenix ranking step or filtered after it.

If you have wondered why a perfectly reasonable post sometimes mysteriously underperforms, this is the likely culprit. A Grox classifier flagged something.
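The label-then-filter split can be pictured as follows. This is a sketch only: the label strings, the `BLOCKING_LABELS` set, and the `visibility_filter` function are hypothetical stand-ins, and the real VFFilter logic is not reproduced here.

```python
# Hypothetical label names; the real label vocabulary is not shown here.
BLOCKING_LABELS = {"spam", "violence", "gore", "abuse"}


def visibility_filter(ranked_post_ids, labels_by_post, blocking_labels=BLOCKING_LABELS):
    """Drop posts carrying any blocking label; keep the ranking order otherwise."""
    return [
        post_id
        for post_id in ranked_post_ids
        if not (labels_by_post.get(post_id, set()) & blocking_labels)
    ]


labels = {"p2": {"spam"}, "p3": {"adult_content"}}
print(visibility_filter(["p1", "p2", "p3"], labels))  # ['p1', 'p3']
```

The classifier and the filter are decoupled: one writes labels asynchronously, the other only reads them at serving time, so a label can affect a post long after it was published.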

What the algorithm explicitly does not boost

This is worth saying twice because the rumour mill keeps repeating the opposite. In the open source code:

- There is no verified-badge boost in the scorer.

- There is no follower-count boost.

- There is no link penalty. Links are not separately weighted.

- There is no freshness boost. The age filter removes posts above a threshold but does not score newer posts higher.

- Subscriber status is used for filtering paywalled content, not for boosting reach.

If verification, Premium, or any of these correlate with reach in practice, it is because the Phoenix transformer has learned to predict that users engage with those posts more, not because a hand-written rule said so. The whole point of the architecture, per the repo, is that every such heuristic has been removed and the model learns the signal from your engagement sequences.

How ads get inserted

Ads ride along through the same pipeline. The blender requires at least five organic posts before an ad can be placed. It computes a spacing interval, partitions candidate ads by brand-safety verdict, and caps the number of ads from the safe set to roughly half the safe-set size. A second layer of contextual checks drops ads when neighbouring posts have a weak brand-safety rating, a conflicting handle, or a keyword collision. The result is an interleaved feed that tries to keep brand-safety risk down without starving the auction.
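The first layer of that blending logic can be sketched as below. The five-organic-post minimum and the half-of-safe-set cap come from the description above; the fixed `spacing` of 4, the function name, and the omission of the contextual neighbour checks are simplifications made for the example.

```python
def blend_ads(organic, safe_ads, min_organic_before_ad=5, spacing=4):
    """Interleave brand-safe ads into an organic feed (simplified sketch).

    spacing is a placeholder; the real blender computes its interval.
    """
    max_ads = len(safe_ads) // 2  # cap at roughly half the safe set
    feed = []
    ads_placed = 0
    since_last_ad = 0
    for index, post in enumerate(organic, start=1):
        feed.append(post)
        since_last_ad += 1
        ready = index >= min_organic_before_ad and since_last_ad >= spacing
        if ready and ads_placed < max_ads:
            feed.append(safe_ads[ads_placed])
            ads_placed += 1
            since_last_ad = 0
    return feed


organic = [f"o{i}" for i in range(1, 13)]
print(blend_ads(organic, ["a1", "a2", "a3", "a4"]))
```

With twelve organic posts and four safe ads, the sketch places the first ad after the fifth organic post, the second after the ninth, and then stops because the cap of two has been reached.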

How creators should post for the 2026 algorithm

Stop optimising for proxies. Optimise for the actions Phoenix is actually predicting:

- Write for replies and reposts, not for likes. A like is one positive weight. A reply, a repost, a quote, and a follow are each separately weighted on top. Posts that ask a question, take a stance, or offer a frame for someone else to argue with consistently outperform posts that close a thought.

- Aim for dwell. A long-form thread, a clear photo, or a video that people watch to the end gets two positive signals: dwell and continuous dwell time. A one-line post you scroll past in a quarter-second gets neither.

- Earn the follow. Follow-author is a predicted action with its own weight. A post that successfully sells a new viewer on hitting follow scores more than a post that doesn’t.

- Do not cluster. Author Diversity attenuates your second and third posts inside the same scoring window. If you have three things to say, space them out by hours, not minutes.

- Avoid anything that gets you muted, blocked, or reported. Those carry explicit negative weights. Engagement bait that produces a single block does measurable damage to the score of that post and any signal it sends about the author.

- Do not be Grox-flagged. Spam-shaped behaviour (reply-bombing with the same line, posting at high frequency with a low follower count, low-quality media) gets you classified by Grox before you ever reach the scorer.

- Follow more accounts in your niche. Phoenix Retrieval is similarity-based, but the OON penalty means in-network candidates still have a head start. The denser your in-network graph in your niche, the more likely your posts surface there.

- Build an engagement history that Phoenix can recognise. The user tower encodes your recent engagement. If you want your content to surface to people who like topic X, engage like a person who likes topic X. The model will learn to send your posts to that cluster.

- Lean into video and photo. Photo expand and video view are both separately weighted positive actions. They give a single post more ways to score.

- DM-share-worthiness is a quiet superpower. Share via DM and share via copy link are each their own weighted action. A post worth sending to a specific friend is, mechanically, a higher-scoring post than a post merely worth liking.

Can I run the algorithm locally?

Yes. With the May 15 release, the runnable inference path is phoenix/run_pipeline.py, and the bundled mini Phoenix checkpoint is enough to score sample posts. You can clone the repo, pull the LFS archive, and watch the pipeline rank a batch end to end. This is, as far as we know, the first time a production-scale social recommendation system has shipped a runnable inference path to the public.

What is next

Two trends are worth watching. The first is the cadence: xAI has been pushing material updates every few weeks. Expect the action weights, retrieval ratios, and Grox classifier set to keep moving. The second is the architecture: candidate sources for “Phoenix MoE” and “Phoenix Topics” suggest the next direction is multiple specialised ranking experts rather than a single transformer, with topic awareness fed in explicitly. Promptable feeds (telling X in natural language what you want more of) are the user-visible end of that trend.

The closing point is the practical one. The 2026 For You algorithm is, more than any version before it, a measurement of how people respond to your post. Strategies that try to game routing, freshness, or format are landing in a system that does not care about those things directly. Strategies that earn replies, holds, follows, and shares are landing in a system that is built, end to end, to reward exactly that.