Marc Andreessen sat down with David Senra for a nearly two-hour conversation that covered everything from caffeine-induced heart palpitations to the structural collapse of managerialism, Elon Musk’s radical management system, and why the greatest entrepreneurs in history share one counterintuitive trait: they don’t look inward.

This is one of the most information-dense podcast conversations of 2025. Here’s everything worth knowing from it.

TL;DR

Marc Andreessen believes introspection is a trap. The greatest founders, from Sam Walton to Elon Musk to Mark Zuckerberg, don’t dwell on the past or second-guess themselves. They just build. In this wide-ranging conversation with David Senra, Andreessen lays out his worldview on founders vs. managers, explains how he and Ben Horowitz modeled a16z after Hollywood talent agency CAA and JP Morgan’s merchant banking model, tells the origin story of Mosaic and Netscape, argues that moral panics about new technology are a pattern as old as written language, and makes a case that Elon Musk has invented an entirely new school of management that may be the least studied and most important organizational innovation in the world today.

Key Takeaways

1. Zero Introspection Is a Founder Superpower

Andreessen opens the conversation by declaring he has “zero” introspection, and he says it like it’s a badge of honor. His reasoning is straightforward: people who dwell on the past get stuck in the past. He traces the entire modern impulse toward self-examination back to Freud and the Vienna-based psychoanalytic movement of the 1910s and 1920s, calling it a manufactured construct that would have been unrecognizable to history’s great builders. Christopher Columbus, Alexander the Great, Thomas Jefferson, Henry Ford: none of them were sitting around in therapy.

Andreessen links this trait to the personality dimension of neuroticism, noting that many of the best founders he’s backed score essentially zero on that scale. They just don’t get emotionally derailed. That said, he acknowledges that some outstanding entrepreneurs are in fact quite neurotic. Low neuroticism is a nice-to-have, not a prerequisite.

2. Psychedelics Are Draining Silicon Valley of Its Best Talent

One of the more provocative segments: Andreessen describes a pattern he’s observed repeatedly in Silicon Valley where high-performing founders get overwhelmed, discover psychedelics, have a transformative experience, and then quit their companies to become surf instructors in Indonesia. He brought this complaint to Andrew Huberman, who gave him a characteristically wise response: how do you know they aren’t happier now? Maybe the thing driving them to build was actually deep insecurity, and the psychedelics simply resolved it.

Andreessen’s response is honest and funny: “Yeah, but their company is failing.” He and Senra both agree they aren’t willing to risk whatever is on the other side of that door. Daniel Ek of Spotify gets a shoutout here. Senra cites Ek’s philosophy that the best entrepreneurs don’t optimize for happiness, they optimize for impact.

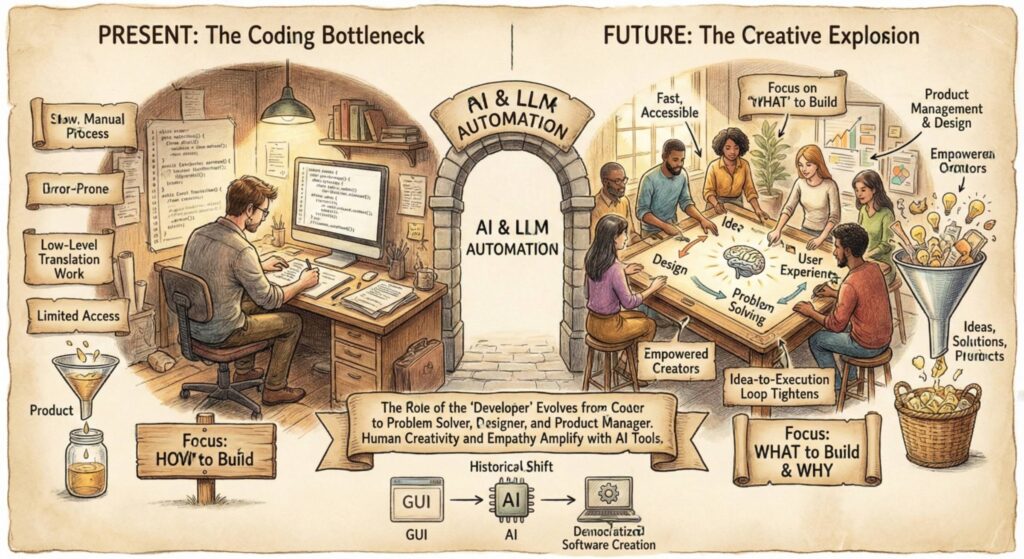

3. The Founder vs. Manager Debate Is the Central Tension of Modern Capitalism

This is the intellectual core of the conversation. Andreessen draws heavily on James Burnham’s 1941 book The Managerial Revolution to frame two competing models of organizational leadership that have existed throughout the history of capitalism.

The first is what Burnham called “bourgeois capitalism,” where the founder runs the company, their name is on the door, and they drive the thing forward through sheer force of will. Henry Ford in the 1920s. Elon Musk today. This was the norm for thousands of years across business, government, religion, and military conquest.

The second is “managerialism,” the rise of the professional manager as a distinct class, trained at business schools, and treated as interchangeable across industries. This model emerged between the 1880s and 1920s and eventually produced the conglomerate era of the 1970s, where the premise was that a sufficiently skilled manager could run any business regardless of domain expertise.

Andreessen’s argument is that Burnham’s thesis has collapsed. Managers are fine when nothing changes, when soup is soup and banks are banks. But the moment the environment shifts, managerial training is useless. SpaceX is the clearest example: imagine being a professionally trained manager at a legacy rocket company when a “crazy guy in California” figures out how to land rockets on their tail. Your MBA isn’t going to help.

The a16z founding thesis, then, is essentially this: it’s much more likely that you can take a founder and teach them to manage at scale than take a manager and teach them to be a founder. That insight has only gotten stronger over time as manager-led institutions across the West lose trust and credibility because they can’t adapt.

4. How a16z Was Built: The CAA Playbook and the Barbell Theory

Before starting a16z, Andreessen and Horowitz spent a year and a half studying how other relationship-driven industries had evolved, including private equity, hedge funds, investment banks, law firms, advertising agencies, management consultancies, and Hollywood talent agencies.

Their key structural insight was what they call the “barbell” or “death of the middle.” In industry after industry, they saw the same pattern: the middle-market firms collapse, and what survives is either ultra-lean boutique operators on one side or scaled platforms with massive networks and deep resources on the other. Department stores like Sears and JCPenney died, replaced by Gucci stores (boutique) and Amazon (scale). Mid-market investment banks disappeared while Allen & Company (boutique, founded in the 1920s, deliberately stayed small) and Goldman Sachs / JP Morgan (scaled) survived.

The same thing had happened in private equity (KKR scaling up while solo operators stayed small), hedge funds, and advertising (the story arc of Mad Men literally dramatizes this process).

In venture capital circa 2009, every firm was still operating as a “tribe of lone wolves.” Partners didn’t collaborate. Secretly, many didn’t even like each other. They were all fighting for bigger slices of what they perceived to be a fixed pie. Generational succession was failing. Andreessen and Horowitz decided to build the first scaled venture platform.

The most direct inspiration came from Michael Ovitz and CAA. When Ovitz started CAA in 1975, Hollywood talent agencies were collections of independent agents. Your agent knew who they knew, and nobody else at the firm was available to help you. Ovitz changed everything. He had his team meeting at 7am instead of the industry-standard 9am, made calls by 8am (two hours before competitors), and called not just his own clients but other agencies’ clients too. The compounding effect was devastating to competitors who were still running on decades-old assumptions.

5. The Origin Story of Mosaic, Netscape, and the Commercial Internet

Andreessen provides a detailed firsthand account of building Mosaic, the first widely used graphical web browser, at the University of Illinois, and then co-founding Netscape with Jim Clark. A few highlights that rarely get told:

The internet was literally illegal to commercialize. The NSF’s “acceptable use policy” prohibited commercial activity on the network. Andreessen personally served as tech support for Mosaic, fielding emails from users who thought their CD-ROM tray was a cup holder. He created a deliberately ambiguous commercial licensing form and watched 400+ commercial licensing requests pile up. That was the signal that there was a real business.

He met Jim Clark at a legendary dinner at an Italian restaurant in Palo Alto with a dozen potential recruits. Andreessen was the only one who said yes. He also got so drunk on red wine (his first time drinking it) that he ripped the entire front end off his new car pulling out of the parking garage.

The conversation also covers the concept of “Eternal September,” the moment in September 1993 when AOL connected its two million users to the internet, permanently transforming it from an ivory-tower utopia of the world’s smartest people into the mainstream consumer platform we know today.

6. Jim Clark Was the Elon Musk of the Early ’90s

Andreessen gives a vivid portrait of Jim Clark, the founder of Silicon Graphics, who had the vision to predict both the GPU revolution (what became Nvidia) and the networked computing revolution (what became the internet) years before anyone else. Clark was volatile, brilliant, and charismatic. He tried to push SGI to build a consumer graphics chip and to pursue networked computing, but the professional CEO the VCs had installed wouldn’t budge. So Clark left and started Netscape.

The Clark story maps perfectly onto Andreessen’s founders-vs.-managers thesis. Silicon Graphics was an incredible company, but it was the founder (Clark) who saw the future, and the manager who refused to act on it. The company that capitalized on Clark’s vision of putting 3D graphics on a cheap chip was Nvidia, which had to be a new company because SGI’s management wouldn’t go there.

7. The Two Jims: How Andreessen Got His Dual Education

Andreessen says his formative training came from two mentors who were “polar opposites”: Jim Clark (the ultimate founder archetype) and Jim Barksdale (the ultimate professional manager, who had run parts of IBM, AT&T, and FedEx before becoming Netscape’s CEO).

Clark represented the “will to power” founder mentality, a fountain of creativity who would bludgeon the world into accepting his ideas. Barksdale represented operational discipline: systematizing, scheduling, building processes. The key was that Barksdale never shut down the innovation; he channeled it. One of the best anecdotes: Clark got heated during a staff meeting about wanting to pursue a new idea, and Barksdale pulled him aside and defused the tension with a perfectly timed Mississippi drawl one-liner that had Clark laughing. They got along great from that point forward.

Andreessen sees himself and Ben Horowitz as a modern version of this dynamic, with Andreessen playing more of the Clark role (fountain of ideas) and Horowitz playing more of the Barksdale role (operational discipline), though both mix it up.

8. Moral Panics Are a Permanent Feature of Human Civilization

Andreessen runs through a history of technology-driven moral panics that stretches across millennia: Plato and Socrates arguing that written language would destroy oral knowledge transmission. The printing press. Playing cards. Novels. Bicycles (which produced the incredible “bicycle face” panic, where young women were warned that the physical exertion of cycling would freeze their faces in an ugly expression, permanently ruining their marriage prospects). Jazz. Rock and roll. Elvis Presley being filmed from the waist up. Comic books. The Walkman. Calculators. Dungeons & Dragons. Heavy metal. Hip-hop (Jimmy Iovine was literally compared to mustard gas in congressional hearings). The early internet.

The point isn’t that technology doesn’t change society. It does. The point is that the panicked, apocalyptic reaction is the same every single time, and it has never been correct at the catastrophic level predicted.

9. Edison Didn’t Know What the Phonograph Would Be Used For, and Neither Do AI Inventors

Andreessen tells a favorite story: Thomas Edison invented the phonograph fully expecting it would be used for families to listen to religious sermons at home after a long day of work. Instead, people immediately used it for ragtime and jazz music, which horrified Edison. The lesson is that the inventors of a technology are often the least qualified people to predict its long-term societal implications, because they’re too buried in the technical specifics. He applies this directly to AI, specifically calling out Geoffrey Hinton as “an actual capital-S socialist” whose prediction that AI will cause mass unemployment requiring universal basic income is really just his pre-existing political ideology dressed up as technological forecasting.

10. Elon Musk Has Invented a New School of Management

The final major section is Andreessen’s detailed breakdown of what he calls Elon Musk’s management method, which he says may be the “least studied and understood thing” in the world right now, despite clearly producing the best results of any organizational method operating today.

The method has several key components:

Bypassing the management stack. Andreessen draws a contrast with IBM in the late 1980s, where he worked as an intern. IBM had 12 layers of management between the lowest employee and the CEO. Each layer lied to the one above it to look good. After 12 rounds of compounding lies, the CEO had absolutely no idea what was happening in his own company. IBM even had an internal term for this: “the big gray cloud,” the entourage of executives in gray suits who followed the CEO everywhere and prevented him from ever speaking to anyone actually doing the work. Musk does the exact opposite: he goes directly to the engineer working on the problem and sits down to solve it with them.

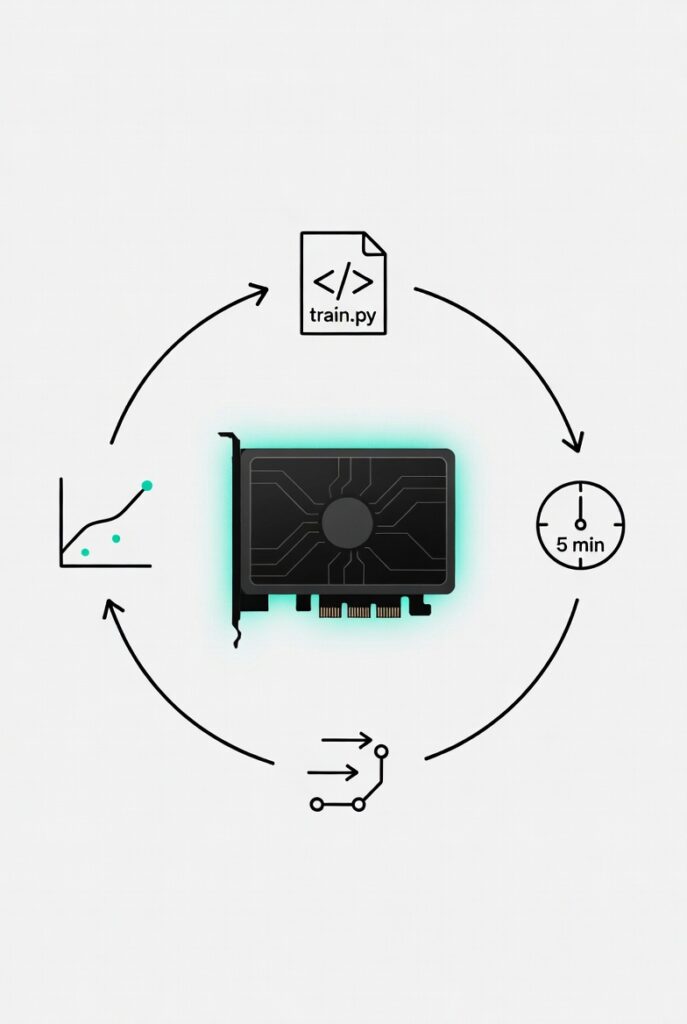

Bottleneck-first thinking. Musk runs each of his companies as a production process. Every week, he identifies the single biggest bottleneck in each company’s production pipeline. Then he personally goes and fixes that bottleneck with the responsible engineer. At Tesla, this means he’s resolving the critical production bottleneck 52 times a year, personally. Legacy automaker CEOs are not doing anything remotely comparable.

120 design reviews per day. Musk does approximately one full day per week at each company, running 12-14 hour stretches of design reviews at five minutes per engineer. That’s roughly 12 reviews per hour and, as a round figure, about 120 per day (a nonstop 12-hour stretch would come to 144, so treat 120 as approximate). Each review identifies whether the project is on track, and if not, whether the problem is the production bottleneck. If it is, that’s where Musk spends the rest of the night, sometimes until 2am, working hands-on with the engineer to fix it.

Maneuver warfare speed. Andreessen compares Musk’s operating tempo to “maneuver warfare,” the military doctrine of acting faster than the opponent can react. Where a normal company might take six months to solve a production problem, Musk solves it in four hours. The cycle time gap is so massive it’s almost incomparable.

Shocking competence through selection pressure. Someone Andreessen knows described joining SpaceX as “being dropped into a zone of shocking competence.” Two forces create this: Musk rapidly identifies and fires underperformers (which he can do because he’s personally talking to the people doing the work), and the world’s best engineers actively want to work for him because he’s the only CEO who can work alongside them as a genuine technical peer. What engineer wouldn’t want to design a rocket engine with Elon Musk as their engineering partner?
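The design-review numbers in the list above are easy to sanity-check. Here is a minimal sketch of the arithmetic in Python; the function names are made up for illustration, and the only inputs are the figures quoted in this recap (five minutes per review, 12-14 hour days, about 120 reviews).

```python
# Back-of-the-envelope check of the design-review throughput quoted above.
# All names here are hypothetical; the inputs come from the recap itself.

MINUTES_PER_REVIEW = 5  # "five minutes per engineer"


def reviews_per_hour(minutes_per_review: int = MINUTES_PER_REVIEW) -> int:
    """Whole reviews that fit into one hour."""
    return 60 // minutes_per_review


def reviews_in(hours: float, minutes_per_review: int = MINUTES_PER_REVIEW) -> int:
    """Whole reviews that fit into a nonstop stretch of the given length."""
    return int(hours * 60 // minutes_per_review)


def hours_needed(reviews: int, minutes_per_review: int = MINUTES_PER_REVIEW) -> float:
    """Hours of back-to-back reviewing needed to reach a given count."""
    return reviews * minutes_per_review / 60


print(reviews_per_hour())              # 12
print(reviews_in(12), reviews_in(14))  # 144 168
print(hours_needed(120))               # 10.0
```

In other words, 120 reviews corresponds to about ten hours of pure review time, which fits inside a 12-14 hour day once anything other than back-to-back reviews is allowed for.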

Andreessen introduces a half-serious, half-brilliant metric for founders: the “milli-Elon.” One milli-Elon is one-thousandth of Elon Musk’s founder capacity. Ten milli-Elons would be fantastic. A hundred, meaning 10% of an Elon, would get you all the money in the world. Most people, he says, are operating at about one milli-Elon or 0.1 milli-Elons.
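For anyone who wants the milli-Elon scale spelled out, here is a small illustrative conversion in Python. The helper name is hypothetical and the unit is Andreessen’s joke, not a real measure; the sketch only restates the "one-thousandth" definition above, using exact fractions to avoid floating-point noise.

```python
# Illustrative only: converts "milli-Elons" to a share of one Elon,
# using the one-thousandth definition given in the text.
from fractions import Fraction


def milli_elons_to_share(milli_elons) -> Fraction:
    """Return the fraction of one full Elon that the given milli-Elons represent."""
    # Going through str() keeps decimal inputs such as 0.1 exact.
    return Fraction(str(milli_elons)) / 1000


for value in (0.1, 1, 10, 100):
    share = milli_elons_to_share(value)
    print(f"{value} milli-Elons = {float(share):.2%} of an Elon")
```

The 100 milli-Elon case comes out to one-tenth, matching the "10% of an Elon" figure in the text.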

11. Starlink Is the Craziest Side Project in Business History

Andreessen ends the Musk discussion by noting that Starlink, now with over 10 million subscribers, is essentially a side project at SpaceX. Two previous attempts at satellite-based internet (Teledesic, backed by Bill Gates and Craig McCaw, and Motorola’s Iridium) were catastrophic failures and classic business school case studies in capital destruction. Musk looked at that track record and said he’d do attempt number three as a side project, using the logic that if SpaceX’s reusable rockets were going to be launching constantly, they might as well carry their own satellites providing consumer-priced internet access. The idea was considered insane by anyone who knew the history. And of course, it worked.

Thoughts

There’s a reason this conversation hit so hard. Andreessen isn’t just sharing opinions. He’s connecting JP Morgan’s 1880s merchant bank, Michael Ovitz’s 1975 Hollywood disruption, James Burnham’s 1941 political theory, IBM’s 1989 collapse, and Elon Musk’s 2025 management operating system into a single coherent framework of organizational theory. Very few people have both the lived experience and the historical knowledge to draw those connections, and even fewer can articulate them this clearly in real time.

The “zero introspection” thesis is going to bother a lot of people, and it is meant to be provocative. But the nuance is there if you listen carefully. Andreessen isn’t saying self-awareness is bad. He’s saying that the specific mode of backward-looking, guilt-driven rumination that modern therapeutic culture encourages is antithetical to the builder personality type. The great founders aren’t unaware. They’re relentlessly forward-oriented.

The founder vs. manager framework is the most underrated idea in business strategy right now. It explains why so many legacy institutions are failing simultaneously, not because the people running them are dumb, but because the managerial class was optimized for stability in a world that no longer rewards it. When the environment changes, and it’s changing faster than ever, the only people equipped to respond are founders.

The Elon Musk management breakdown alone is worth the entire conversation. The concept of identifying and personally fixing the critical production bottleneck every single week, for every company, by going directly to the engineer rather than through layers of management, is so simple it’s almost embarrassing that no one else does it. But that’s Andreessen’s point: almost no one can do it, because it requires a CEO who is simultaneously a world-class manager and a world-class technologist. That combination barely exists.

If you’re a founder, operator, or anyone trying to build something that matters, this is required listening.