Subquadratic, the AI infrastructure company behind subq.ai, just emerged from stealth with a $29M seed round and a claim that should make every AI engineer pay attention: they have built the first large language model whose compute scales linearly, not quadratically, with context length. The result is SubQ, a frontier model with a 12 million token context window, a reported cost up to 50x lower than leading frontier models at 1M tokens, and benchmark numbers that put it ahead of or competitive with Gemini 3.1 Pro, Claude Opus 4.6/4.7, and GPT-5.4/5.5 on key long-context tasks. This is a deep, opinionated breakdown of everything Subquadratic has published so far: who is behind it, why a sub-quadratic architecture matters, and what changes for developers, agents, and enterprise AI if the numbers hold up.

TLDR

Subquadratic is a Miami-based frontier AI lab that launched on May 5, 2026 with $29M in seed funding and a new LLM called SubQ. The company describes SubQ as the first fully sub-quadratic LLM, meaning attention compute grows linearly with context length instead of quadratically. The model offers a 12M token context window, around 150 tokens per second, roughly one-fifth the cost of leading frontier models, 95% accuracy on RULER 128K, and 92% accuracy at the full 12M tokens. The company is targeting 100M tokens by Q4 2026. Two products are launching in private beta: SubQ API (OpenAI-compatible, streaming, tool use) and SubQ Code (a CLI coding agent that plugs into Claude Code, Codex, and Cursor to load entire repositories into a single context window).

Key Takeaways

- SubQ is the first fully sub-quadratic LLM, with attention compute scaling at O(n) instead of the transformer’s O(n²).

- The context window is 12 million tokens, enough to fit the entire Python 3.13 standard library (around 5.1M tokens) or roughly 1,050 React pull requests (around 7.5M tokens) in a single prompt.

- At 12M tokens, SubQ reduces attention compute by almost 1,000x compared to other frontier models.

- Cost benchmark: 95% accuracy on RULER 128K at $8 of compute, versus 94% accuracy at roughly $2,600 on Claude Opus, reported as a 260x to 300x cost reduction.

- Speed: about 150 tokens per second.

- Cost: roughly one-fifth that of other leading LLMs according to the company’s marketing copy, while launch coverage cites a figure of more than 50x cheaper at 1M tokens.

- Two products in private beta: SubQ API (12M token window, streaming, tool use, OpenAI-compatible endpoints) and SubQ Code (one-line install CLI for coding agents, ~25% lower bills, 10x faster exploration, auto-redirects expensive model turns).

- SubQ Code integrates with Claude Code, Codex, and Cursor, positioning Subquadratic as the long-context infrastructure layer beneath existing agent workflows rather than a competing chat product.

- Architecture: a fully sub-quadratic sparse-attention design that learns which token relationships actually matter and skips the rest, redesigned from first principles.

- Funding: $29M seed led by investors including Javier Villamizar (former SoftBank Vision Fund partner) and Justin Mateen (Tinder co-founder, JAM Fund), alongside early investors in Anthropic, OpenAI, Stripe, and Brex.

- Founders: Justin Dangel (CEO, five-time founder) and Alex Whedon (CTO, ex-Meta engineer, former Head of Generative AI at TribeAI). Research team includes PhDs from Meta, Google, Oxford, Cambridge, and BYU.

- Headcount is 11 to 50, headquartered in Miami, Florida, with active hiring for API engineering, developer advocacy, product design, sales, and people operations.

- Tagline and thesis: “Efficiency is Intelligence.” The company argues that quadratic attention has been the real ceiling on AI applications, and breaking it unlocks workloads that were previously cost-prohibitive or architecturally impossible.

Detailed Summary

What is Subquadratic and what is SubQ?

Subquadratic is a frontier AI research and infrastructure company. Its public homepage is intentionally minimal, with the single line “Efficiency is Intelligence.” and a contact email address. The full product story lives on the launch demo site, where the company introduces SubQ as the first model built specifically for long-context tasks. The pitch is direct: SubQ is a sub-quadratic LLM built for 12M-token reasoning, allowing agents to work across full repositories, long histories, and persistent state without quality loss.

Three numbers dominate the marketing copy. Context: 12M token reasoning. Speed: 150 tokens per second. Cost: one-fifth of other leading LLMs. Those three numbers, taken together, are why this launch matters. Until now, you could optimize for one of the three at a time. SubQ claims to push all three at once because the underlying architecture changed, not because the company applied better quantization or smarter caching on top of a transformer.

The architecture: why “sub-quadratic” is the whole story

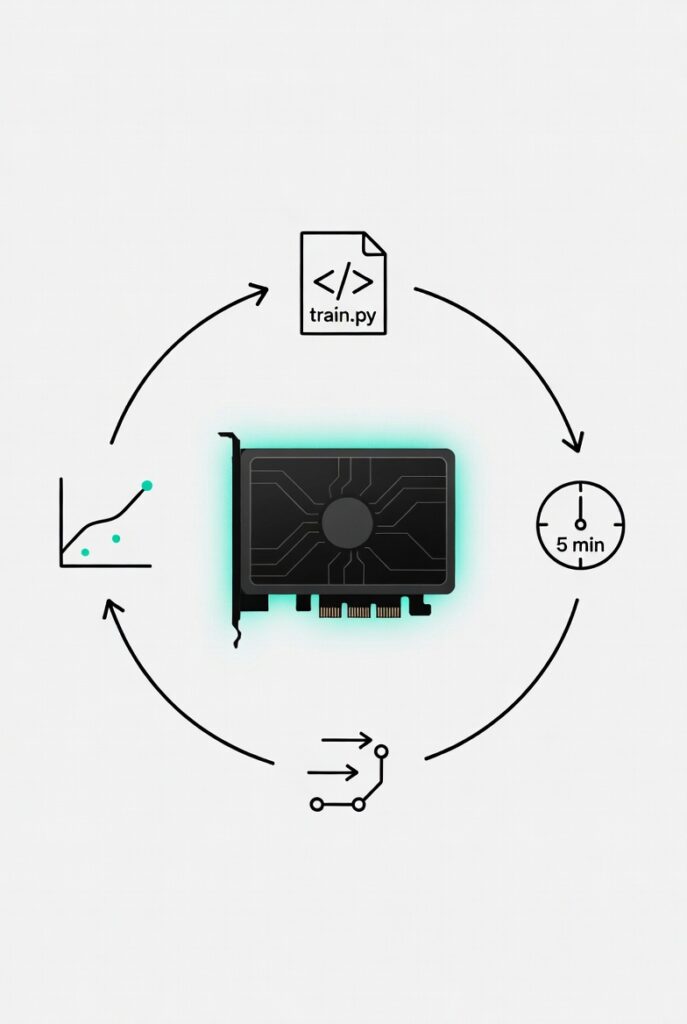

Standard transformers, the architecture behind ChatGPT, Claude, Gemini, and almost everything else, use dense self-attention. Every token compares itself to every other token, which means compute scales as O(n²) in the context length n. Double the context, quadruple the compute. That single property is the reason context windows are usually capped at 128K tokens for open models and around 1M tokens for the most aggressive frontier offerings, and it is the reason most production AI systems lean on retrieval-augmented generation, chunking, agentic retrieval, and prompt engineering tricks to dodge the cost curve entirely.
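The "double the context, quadruple the compute" rule can be checked with a few lines of arithmetic. The sketch below only counts pairwise token comparisons under dense attention; it ignores constant factors such as head count and hidden size, and the function names are illustrative.

```python
def dense_attention_pairs(n_tokens: int) -> int:
    """Token-to-token comparisons when every token attends to every token."""
    return n_tokens * n_tokens


def growth_factor(n_tokens: int, multiplier: int) -> float:
    """How much dense attention work grows when the context is multiplied."""
    grown = dense_attention_pairs(n_tokens * multiplier)
    return grown / dense_attention_pairs(n_tokens)


# Pairwise comparison counts at the context sizes discussed in this article.
for label, n in [("128K", 128_000), ("1M", 1_000_000), ("12M", 12_000_000)]:
    pairs = dense_attention_pairs(n)
    print(f"{label:>4} tokens -> {pairs:.2e} pairwise comparisons")

# Doubling the context multiplies the dense attention work by four.
print(growth_factor(128_000, 2))
```

Going from 1M to 12M tokens is a 12x longer context but a 144x larger comparison count, which is why dense attention becomes the dominant cost at this scale.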

SubQ is built on a fully sub-quadratic sparse-attention architecture, redesigned from first principles. The argument from co-founder and CEO Justin Dangel is that LLMs waste compute by processing every possible token-to-token relationship when only a small fraction of those relationships actually matter for the task. SubQ learns to find and focus only on those relevant relationships, which is what brings the scaling behavior down from O(n²) to O(n). At 12M tokens, this design cuts attention compute by almost 1,000x compared to other frontier models. The research community has been chasing this for years through linear attention, state space models, Mamba, and various sparse attention variants. According to Subquadratic, the unsolved problem was never the idea, it was building a sub-quadratic architecture that did not sacrifice frontier-level accuracy. That is what their team spent the time on.
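To get a feel for the "almost 1,000x" figure, here is a back-of-envelope cost model. It assumes a generic scheme in which each token attends to at most a fixed budget of `k` other tokens, which is one simple way to reach linear scaling and is not necessarily how SubQ works; Subquadratic has not published its mechanism. The budget `k` and every function name here are hypothetical.

```python
def dense_cost(n_tokens: int) -> int:
    """Dense attention: every token is compared with every token."""
    return n_tokens * n_tokens


def fixed_budget_cost(n_tokens: int, budget: int) -> int:
    """Hypothetical sparse scheme: each token attends to at most `budget` tokens."""
    return n_tokens * min(budget, n_tokens)


def reduction(n_tokens: int, budget: int) -> float:
    """How many times cheaper the fixed-budget scheme is than dense attention."""
    return dense_cost(n_tokens) / fixed_budget_cost(n_tokens, budget)


def implied_budget(n_tokens: int, claimed_reduction: float) -> float:
    """Per-token budget that would produce the claimed reduction in this model."""
    return n_tokens / claimed_reduction


n = 12_000_000
print(implied_budget(n, 1_000))  # budget implied by a 1,000x reduction at 12M
print(reduction(n, 12_000))      # reduction for a 12,000-token budget
print(reduction(1_000_000, 12_000))  # same budget at 1M tokens
```

Under this simplified model, the saving grows with context length: the same budget that yields 1,000x at 12M tokens yields far less at 1M, which is consistent with the article's point that the economics matter most when the workload actually uses a long context.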

The benchmarks

Subquadratic published a benchmark table comparing a SubQ 1M-Preview against Gemini 3.1 Pro, Claude Opus 4.6, Claude Opus 4.7, GPT-5.4, and GPT-5.5 across SWE-Bench Verified (real-world software engineering), RULER at 128K (long-context accuracy across 13 tests), and MRCR v2 8-needle at 1M (multi-round coreference resolution).

- SWE-Bench Verified: SubQ scores 81.8%, ahead of Gemini 3.1 Pro at 80.6% and Opus 4.6 at 80.8%, with Opus 4.7 leading at 87.6%.

- RULER at 128K: SubQ scores 95.0%, narrowly ahead of Opus 4.6 at 94.8% (internally evaluated). Other vendors did not report this benchmark.

- MRCR v2 8-needle, 1M: SubQ scores 65.9%, behind Opus 4.6 at 78.3% and GPT-5.5 at 74.0%, but well ahead of GPT-5.4 at 36.6%, Opus 4.7 at 32.2%, and Gemini 3.1 Pro at 26.3%.

- The launch blog post adds that on RULER 128K, SubQ scored 95% accuracy at $8 of compute, versus 94% on Claude Opus at roughly $2,600. That is reported as a cost reduction of about 260x at slightly higher accuracy, although dividing the quoted dollar figures directly gives closer to 325x.

- On MRCR v2 specifically, the launch post lists SubQ at 83, Claude Opus at 78, GPT-5.4 at 39, and Gemini 3.1 Pro at 23.

- At the full 12M token context, SubQ hits 92% on RULER while other frontier models reportedly break down well before reaching their stated 1M-token limit.

- Subquadratic notes the SubQ results are third-party validated and a full technical report is forthcoming.

The story these numbers tell is mostly consistent: SubQ is competitive on traditional benchmarks like SWE-Bench, at or near the top on long-context benchmarks (ahead on RULER 128K, though behind Opus 4.6 and GPT-5.5 on MRCR v2 in the public table), and dramatically cheaper to run when the workload actually exercises a long context.

The two products: SubQ API and SubQ Code

SubQ ships in two flavors. The first is SubQ API, the full-context API for developers and enterprise teams. It exposes the 12M token context window, supports streaming and tool use, and uses OpenAI-compatible endpoints so existing client libraries and orchestration code can be repointed with minimal change. The product positioning is to process full repositories and pipeline states in a single API call at linear cost, rather than chunking inputs and stitching results.
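In practice, "OpenAI-compatible" usually means a client only needs a different base URL and model name. The sketch below builds, but does not send, a chat-completions request in the OpenAI wire format using only the Python standard library. The base URL, model id, and API key are placeholders: Subquadratic has not published the real values, and the assumption that SubQ exposes the `/chat/completions` path is inferred from the compatibility claim rather than confirmed.

```python
import json
import urllib.request

# Placeholder values: the real base URL and model id are not public.
BASE_URL = "https://api.example.invalid/v1"
MODEL = "subq-placeholder"


def build_chat_request(api_key: str, prompt: str,
                       stream: bool = True) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat-completions request."""
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
        "stream": stream,  # streaming is one of the advertised features
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_chat_request("sk-placeholder", "Summarize this repository.")
print(request.get_method(), request.full_url)
```

If the compatibility claim holds, repointing an existing integration would amount to changing `BASE_URL` and `MODEL` while leaving the payload shape untouched.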

The second is SubQ Code, a long-context layer designed specifically for coding agents. Instead of competing with Claude Code, Codex, or Cursor, SubQ Code plugs into them. It maps codebases, gathers context, and answers token-heavy questions faster than the host agent’s default model. According to Subquadratic, the integration delivers roughly 25% lower bills and around 10x faster exploration, auto-redirects the most expensive model turns to SubQ, and installs in a single line. The design implication is that agent builders do not have to switch ecosystems to benefit from a 12M token window. They keep their preferred agent and offload the heavy long-context work to SubQ.
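The "auto-redirect" idea can be pictured as a simple routing rule. The sketch below is a hypothetical illustration only: the token threshold, the backend labels, and the `Turn` type are invented for this example, and Subquadratic has not described how SubQ Code actually decides which turns to redirect.

```python
from dataclasses import dataclass

# Hypothetical cutoff above which a turn counts as "expensive".
LONG_CONTEXT_THRESHOLD = 200_000


@dataclass(frozen=True)
class Turn:
    description: str
    estimated_input_tokens: int


def choose_backend(turn: Turn, threshold: int = LONG_CONTEXT_THRESHOLD) -> str:
    """Send token-heavy turns to the long-context model, the rest to the host agent."""
    if turn.estimated_input_tokens > threshold:
        return "long_context_model"
    return "host_agent_model"


turns = [
    Turn("rename a local variable", 4_000),
    Turn("map every caller of a deprecated API across the repo", 3_000_000),
]

for turn in turns:
    print(f"{turn.description}: {choose_backend(turn)}")
```

A real router would likely weigh price, latency, and task type as well as raw token counts, but the shape of the decision is the same: the host agent keeps the cheap turns and offloads the expensive ones.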

Both products are in private beta. Access is gated through a request early access form where applicants choose SubQ Code, SubQ API, or both, and provide context about their workload.

What 12M tokens actually unlocks

Subquadratic illustrates the size of the context window with two concrete examples. The entire Python 3.13 standard library is roughly 5.1M tokens, well under the limit. Six months of React pull requests, around 1,050 PRs against the React codebase, comes in around 7.5M tokens, also under the limit with room to spare. At this scale, the standard pattern of curating which files or chunks the model gets to see goes away. The model just sees everything.
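A quick budget check makes the headroom concrete. The token counts below are the approximate figures quoted above; the helper name is illustrative.

```python
CONTEXT_LIMIT = 12_000_000  # SubQ's stated context window, in tokens

# Approximate token counts quoted by Subquadratic.
corpora = {
    "Python 3.13 standard library": 5_100_000,
    "Six months of React PRs (~1,050)": 7_500_000,
}


def headroom(tokens: int, limit: int = CONTEXT_LIMIT) -> int:
    """Tokens left in the window after loading a corpus."""
    return limit - tokens


for name, tokens in corpora.items():
    share = tokens / CONTEXT_LIMIT
    print(f"{name}: {share:.1%} of the window, {headroom(tokens):,} tokens spare")

# Each example fits on its own, but the two together would not.
combined = sum(corpora.values())
print(f"Both together: {combined:,} tokens, fits={combined <= CONTEXT_LIMIT}")
```

Each example fits individually, but the two combined come to about 12.6M tokens, slightly over the limit, so curation does not disappear entirely for workloads that span several large corpora.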

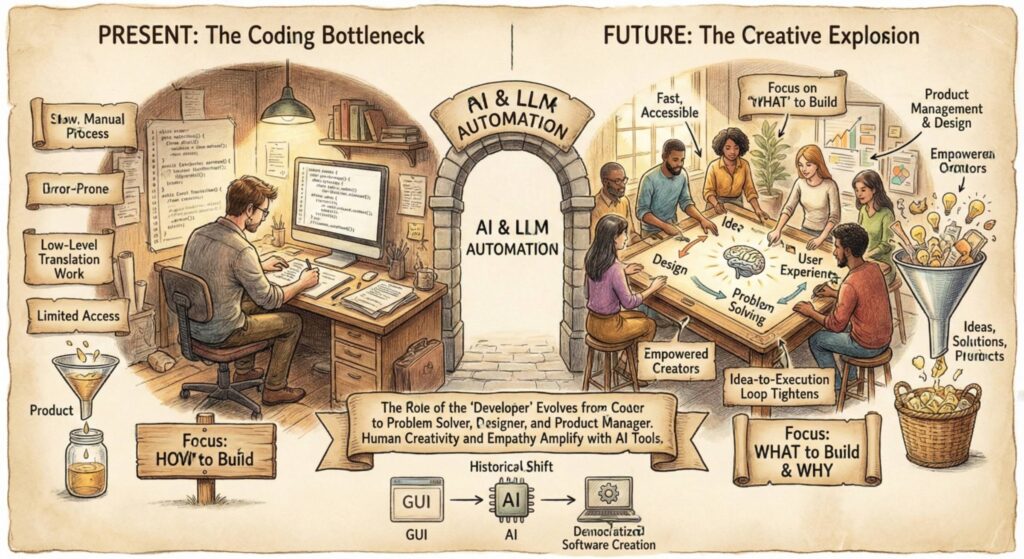

The downstream implications are significant. RAG pipelines, embedding stores, chunking heuristics, and multi-agent coordination layers exist primarily to compensate for short context windows and quadratic compute. If a model can ingest the whole corpus in one pass at linear cost, large parts of that workaround stack become optional. Long-running agents can preserve full state instead of summarizing it. Coding agents can reason about a refactor across an entire repository without juggling tool calls. Document-heavy workflows in legal, finance, and research can run on the source material directly. And if Subquadratic hits its 100M token target, planned for Q4 2026, the design space shifts again toward applications that depend on persistent state and long time horizons.

The economic argument

Subquadratic’s framing is that cost has become the binding constraint on AI deployment, not capability. Many ideas never reach production because the unit economics do not work out. Quadratic attention is the structural reason for that. By breaking the scaling law, SubQ aims to make previously cost-prohibitive workloads viable at scale: high-volume inference, longer included context, and applications that rely on sustained interaction with the model. The 260x to 300x cost reduction reported on RULER 128K is the headline number that operationalizes this thesis.
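The headline ratio is easy to sanity-check from the two dollar figures quoted for RULER 128K. The sketch below uses only those published numbers; both are described as approximate, and the basis for the compute cost has not yet been disclosed, so the result is indicative at best.

```python
SUBQ_COST_USD = 8.0       # quoted compute cost for SubQ on RULER 128K
OPUS_COST_USD = 2_600.0   # quoted approximate cost for Claude Opus


def cost_ratio(baseline: float, candidate: float) -> float:
    """How many times cheaper the candidate is than the baseline."""
    return baseline / candidate


def baseline_for_ratio(ratio: float, candidate: float) -> float:
    """Baseline cost that would produce a given ratio at the candidate's cost."""
    return ratio * candidate


print(cost_ratio(OPUS_COST_USD, SUBQ_COST_USD))

# Baseline costs that would correspond to the reported 260x to 300x range.
for reported in (260, 300):
    print(reported, baseline_for_ratio(reported, SUBQ_COST_USD))
```

Taken at face value, the quoted figures give a ratio of 325x, slightly above the reported 260x to 300x range. The gap is small and may simply reflect rounding in the "roughly $2,600" figure, which is one more reason the forthcoming technical report matters.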

The team and the funding

Subquadratic raised $29M in seed funding. Investors include Javier Villamizar, former partner at SoftBank Vision Fund, and Justin Mateen, co-founder of Tinder and founder of JAM Fund, alongside early investors in Anthropic, OpenAI, Stripe, and Brex. CEO Justin Dangel is a five-time founder with prior companies in health tech, insurance tech, and consumer goods. CTO Alex Whedon previously worked as a software engineer at Meta and led over 40 enterprise AI implementations as Head of Generative AI at TribeAI. The research team is built around PhDs and published researchers from Meta, Google, Oxford, Cambridge, and BYU. The company is headquartered in Miami, Florida, with a headcount in the 11 to 50 range.

Public hiring lists show the company is staffing across API engineering, founding developer advocacy, principal full-stack engineering, technical copywriting, account executive roles for enterprise sales, senior product design for the Voice AI and API surface, and head of people and talent operations. The Voice AI mention is notable because the public homepage at subq.ai still references a Speech-To-Text API as a current product, suggesting Subquadratic is operating across both speech and language with the same architectural thesis.

The site itself

The current public site at subq.ai is deliberately spartan. Visitors see only the company name, the line “Efficiency is Intelligence.”, and a contact email. The full marketing surface lives at the launch demo URL, which acts as the de facto homepage for the launch and links out to the request early access flow, the introducing SubQ blog post, the LinkedIn page, the X account, the Discord community, careers, a press contact email, terms of use, privacy policy, cookies policy, and acceptable use policy. The structure makes sense for a private beta launch: keep the apex domain minimal, push announcement traffic to a dedicated launch site, and gate product access behind a form.

Thoughts

The interesting part of Subquadratic’s pitch is not the context window. It is the implicit claim that the entire workaround economy built around transformers (RAG vendors, vector databases, chunking middleware, agentic retrieval frameworks, context compression startups) was always a tax paid because of one architectural property: O(n²). If SubQ’s numbers hold up under independent scrutiny, a meaningful slice of that ecosystem becomes optional rather than mandatory. That has product, infrastructure, and venture implications that go well beyond a faster, cheaper LLM.

The product strategy is also notably humble in a smart way. Subquadratic is not trying to win the consumer chat war against ChatGPT, Claude, or Gemini. SubQ Code is positioned as a layer underneath Claude Code, Codex, and Cursor, and the API is OpenAI-compatible. That is a classic infrastructure play: do not ask developers to abandon their tools, just route the expensive long-context turns to you. The “auto-redirects expensive model turns” framing is essentially a routing economic argument aimed at agent builders who already feel the pain of paying frontier prices for high-token requests.

There are open questions worth holding lightly. The MRCR v2 numbers in the public benchmark table show SubQ behind Opus 4.6 and GPT-5.5, even as the launch post emphasizes a higher relative score. The cost comparisons rely on a specific compute basis that the upcoming technical report will need to spell out. And the gap between strong RULER scores at 128K and the 92% claim at 12M tokens is a long way to extrapolate without external replication. None of this is unusual for a launch, but it is the right place to apply pressure once the technical report drops.

The bigger architectural bet is the one that should hold attention. If sub-quadratic attention done well genuinely matches frontier accuracy, then context length stops being a meaningful product axis and a generation of brittle infrastructure built around context limits gets reconsidered. Subquadratic is making the strongest public case so far that the post-transformer era starts with attention scaling, not parameter count. The next twelve months (the technical report, third-party benchmarks, and the first real production deployments through SubQ Code) will tell us whether this is the inflection point or another promising direction that does not quite cross the line. Either way, “Efficiency is Intelligence” is the right frame for where AI economics are heading, and Subquadratic is one of the few companies whose architecture is consistent with the slogan.