Artificial intelligence is about to hit an energy wall. As data centers devour gigawatts to power models like GPT-4, the cost of computation is scaling faster than our ability to produce electricity. Extropic Corporation, a deep-tech startup founded three years ago, believes it has found a way through that wall: reinventing the computer itself. The company claims its new class of thermodynamic hardware could make generative AI up to 10,000× more energy-efficient than today’s GPUs.

From GPUs to TSUs: The End of the Hardware Lottery

Modern AI runs on GPUs, chips originally designed for graphics rendering rather than probabilistic reasoning. Every floating-point operation costs energy, much of it spent moving data across silicon. Extropic argues that this design is fundamentally mismatched to the needs of modern AI, which is probabilistic by nature: instead of computing exact results, generative models sample from vast probability spaces. The company’s solution is the Thermodynamic Sampling Unit (TSU), a chip that doesn’t process numbers but samples from probability distributions directly.

TSUs are built entirely from standard CMOS transistors, meaning they can scale using existing semiconductor fabs. Unlike exotic academic approaches that require magnetic junctions or optical randomness, Extropic’s design uses the natural thermal noise of transistors as its source of entropy. This turns what engineers usually fight to suppress — noise — into the very fuel for computation.

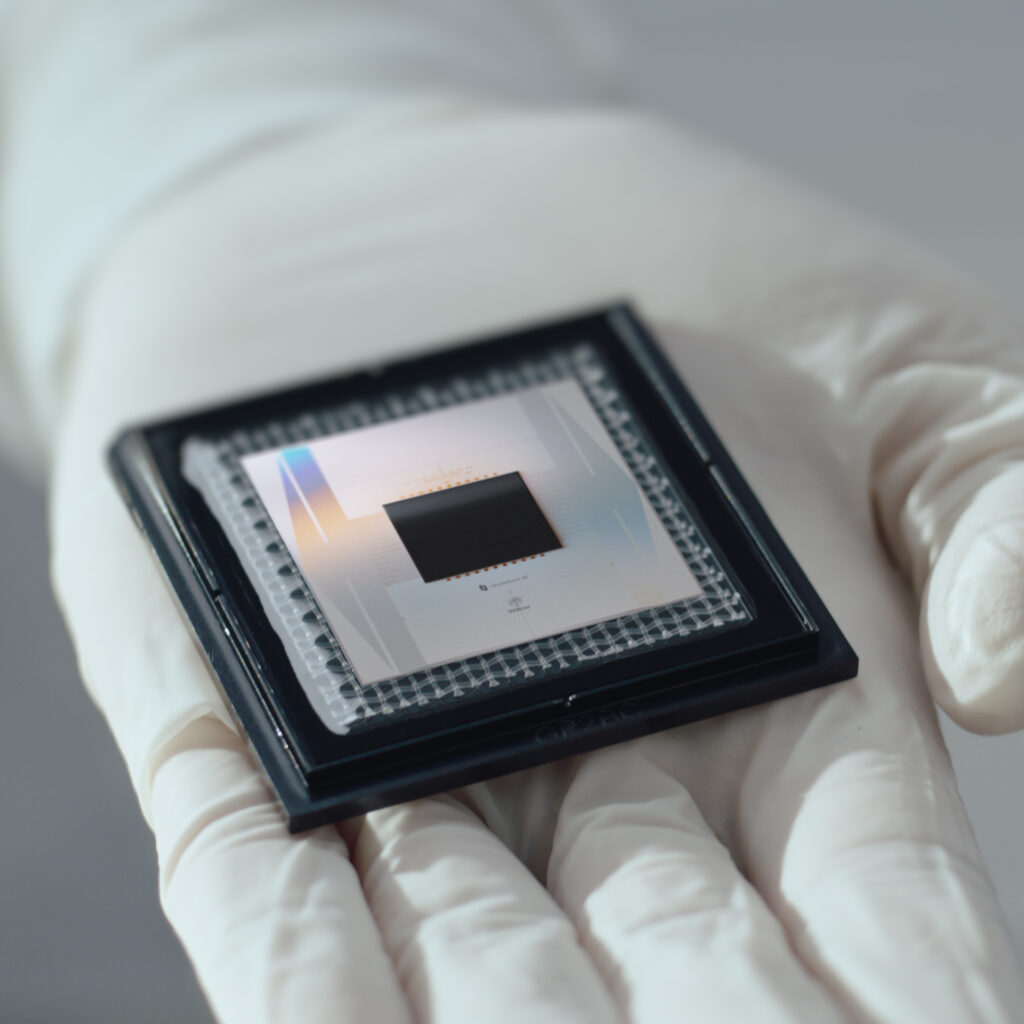

X0 and XTR-0: The Birth of a New Computing Platform

Extropic’s first hardware platform, XTR-0 (Experimental Testing & Research Platform 0), combines a CPU, FPGA, and sockets for daughterboards containing early test chips called X0. X0 proved that all-transistor probabilistic circuits can generate programmable randomness at scale. These chips perform operations like sampling from Bernoulli, Gaussian, or categorical distributions, the building blocks of probabilistic AI.

The company’s pbit circuit acts like an electronic coin flipper, generating millions of biased random bits per second. By Extropic’s account, each bit costs roughly 10,000× less energy than a single floating-point addition on a GPU. Higher-order circuits like pdit (categorical sampler), pmode (Gaussian sampler), and pMoG (mixture-of-Gaussians generator) expand the toolkit, enabling full probabilistic models to be implemented natively in silicon. Together, these circuits form the foundation of the TSU architecture, a physical embodiment of energy-based computation.
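To make the coin-flipper analogy concrete, here is a minimal software sketch of what a pbit and a pdit compute. It is not Extropic’s circuit or API: the function names, the sigmoid mapping from a control value to a probability, and the weight-based categorical sampler are all assumptions chosen for illustration.

```python
import math
import random
from typing import Sequence


def pbit(bias: float, rng: random.Random) -> int:
    """Software stand-in for a pbit: return 1 with probability sigmoid(bias).

    The sigmoid parameterization is an assumption made for this sketch.
    """
    p_one = 1.0 / (1.0 + math.exp(-bias))
    return 1 if rng.random() < p_one else 0


def pdit(weights: Sequence[float], rng: random.Random) -> int:
    """Software stand-in for a pdit: return index k with probability
    weights[k] / sum(weights). Weights must be non-negative."""
    threshold = rng.random() * sum(weights)
    cumulative = 0.0
    for k, weight in enumerate(weights):
        cumulative += weight
        if threshold < cumulative:
            return k
    return len(weights) - 1  # guard against floating-point round-off


rng = random.Random(0)
n_samples = 20000

# Empirical frequency of 1s should approach sigmoid(1.0).
pbit_mean = sum(pbit(1.0, rng) for _ in range(n_samples)) / n_samples

# Empirical category frequencies should approach 0.25, 0.5, 0.25.
counts = [0, 0, 0]
for _ in range(n_samples):
    counts[pdit([1.0, 2.0, 1.0], rng)] += 1
pdit_freqs = [c / n_samples for c in counts]

print(pbit_mean, pdit_freqs)
```

On a CPU or GPU each of these draws costs a pseudo-random number plus arithmetic. The hardware claim is that a TSU gets the same kind of sample directly from transistor noise.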

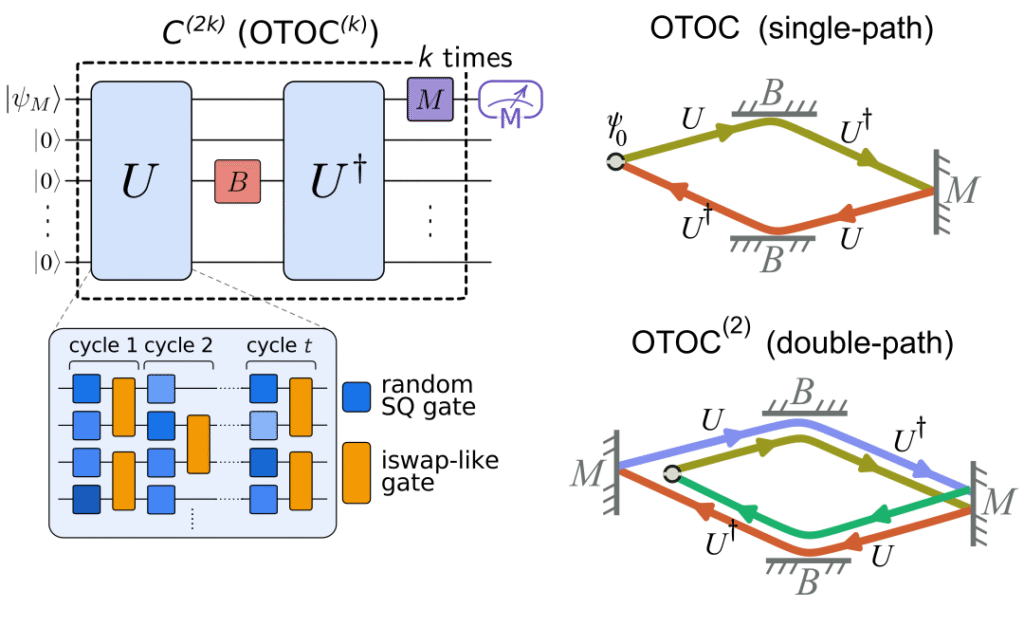

The Denoising Thermodynamic Model (DTM): Diffusion Without the Energy Bill

Hardware alone isn’t enough. Extropic also introduced a new AI algorithm built specifically for TSUs: the Denoising Thermodynamic Model (DTM). Inspired by diffusion models like Stable Diffusion, DTMs chain together multiple energy-based models (EBMs) that gradually denoise data over time. This architecture avoids the “mixing–expressivity trade-off” that plagues traditional EBMs, making DTMs both scalable and efficient.
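As a schematic of the chaining idea only, a model of this kind can be written as a reverse denoising chain in which every step is its own energy-based model. The notation below ($x_t$ for the data after $t$ noising steps, $E_\theta^{(t)}$ for the energy function at step $t$) is assumed for illustration and may not match the paper’s exact formulation:

$$
p_\theta(x_0) = \sum_{x_1, \dots, x_T} p(x_T) \prod_{t=1}^{T} p_\theta(x_{t-1} \mid x_t),
\qquad
p_\theta(x_{t-1} \mid x_t) \propto \exp\!\big(-E_\theta^{(t)}(x_{t-1}, x_t)\big)
$$

The intuition is that each conditional only has to undo a small amount of noise, so each individual energy landscape can stay simple enough to sample quickly, while the product of many steps remains expressive.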

In simulations, DTMs running on modeled TSUs matched GPU-based diffusion models on image-generation benchmarks like Fashion-MNIST while consuming roughly one ten-thousandth the energy, a gap of about four orders of magnitude per generated image. The company’s open-source library, thrml, lets researchers simulate TSUs today, and even replicate the paper’s results on a GPU before the chips ship.

The Physics of Intelligence: Turning Noise Into Computation

At the heart of thermodynamic computing is a radical idea: computation as a physical relaxation process. Instead of enforcing digital determinism, TSUs let physical systems settle into low-energy configurations that correspond to probable solutions. This isn’t metaphorical: the chips literally use thermal fluctuations to perform Gibbs sampling across energy landscapes defined by machine-learned functions.

In practical terms, it’s like replacing the brute-force precision of a GPU with the subtle statistical behavior of nature itself. Each transistor becomes a tiny particle in a thermodynamic system, collectively simulating the world’s most efficient sampler: reality.
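For readers who have not met Gibbs sampling, the following is a minimal software sketch on a tiny Ising-style model, in which each variable is repeatedly resampled from its conditional distribution given the others. The three-spin model, the coupling values, and all function names are assumptions for illustration. A TSU would perform the resampling step physically, and nothing here reflects Extropic’s actual circuits or the thrml API.

```python
import itertools
import math
import random


def energy(spins, couplings, fields):
    """Ising-style energy; lower energy means a more probable state."""
    n = len(spins)
    pair_term = sum(couplings[i][j] * spins[i] * spins[j]
                    for i in range(n) for j in range(i + 1, n))
    field_term = sum(fields[i] * spins[i] for i in range(n))
    return -pair_term - field_term


def gibbs_sweep(spins, couplings, fields, beta, rng):
    """Resample each spin in turn from its conditional given all the others.

    Assumes a symmetric coupling matrix.
    """
    n = len(spins)
    for i in range(n):
        local = fields[i] + sum(couplings[i][j] * spins[j]
                                for j in range(n) if j != i)
        p_up = 1.0 / (1.0 + math.exp(-2.0 * beta * local))
        spins[i] = 1 if rng.random() < p_up else -1


def exact_probability(predicate, couplings, fields, beta):
    """Brute-force Boltzmann probability of an event (small models only)."""
    total, hit = 0.0, 0.0
    for state in itertools.product((-1, 1), repeat=len(fields)):
        weight = math.exp(-beta * energy(state, couplings, fields))
        total += weight
        if predicate(state):
            hit += weight
    return hit / total


def all_aligned(state):
    return abs(sum(state)) == len(state)


# Three mutually attracting spins with no external field (illustrative values).
couplings = [[0.0, 1.0, 1.0], [1.0, 0.0, 1.0], [1.0, 1.0, 0.0]]
fields = [0.0, 0.0, 0.0]
beta = 1.0

rng = random.Random(0)
spins = [1, -1, 1]
for _ in range(100):  # burn-in sweeps
    gibbs_sweep(spins, couplings, fields, beta, rng)

n_sweeps = 5000
hits = 0
for _ in range(n_sweeps):
    gibbs_sweep(spins, couplings, fields, beta, rng)
    hits += all_aligned(spins)

sampled_aligned = hits / n_sweeps
exact_aligned = exact_probability(all_aligned, couplings, fields, beta)
print(sampled_aligned, exact_aligned)
```

The sampled frequency of the low-energy, fully aligned states should land close to the exact Boltzmann probability, which is the sense in which "settling into low-energy configurations" yields probable answers.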

From Lab Demo to Scalable Platform

The XTR-0 kit is already in the hands of select researchers, startups, and tinkerers. Its modular design allows easy upgrades to upcoming chips like Z-1, Extropic’s first production-scale TSU, which is intended to support complex probabilistic machine learning workloads. Eventually, Extropic expects TSUs to integrate directly with conventional accelerators, possibly as PCIe cards or even hybrid GPU-TSU chips.

Extropic’s roadmap extends beyond AI. Because TSUs efficiently sample from continuous probabilistic systems, they could accelerate simulations in physics, chemistry, and biology — domains that already rely on stochastic processes. The company envisions a world where thermodynamic computing powers climate models, drug discovery, and autonomous reasoning systems, all at a fraction of today’s energy cost.

Breaking the AI Energy Wall

Extropic’s October 2025 announcement comes at a pivotal time. Data centers are facing grid bottlenecks across the U.S., and some companies are building nuclear-adjacent facilities just to keep up with AI demand. With energy costs set to define the next decade of AI, a 10,000× improvement in energy efficiency would be more than an innovation; it would be a revolution.

If Extropic’s thermodynamic hardware lives up to its promise, it could mark a “zero-to-one” moment for computing, one where the laws of physics, not the limits of silicon, define what’s possible. As the company put it in its launch note: “Once we succeed, energy constraints will no longer limit AI scaling.”

Read the full technical paper on arXiv and explore the official Extropic site for their thermodynamic roadmap.